How Can We Ban Superintelligence?

The world is waking up to AI hacking, but this misses the bigger picture of the threat posed by superintelligence. What’s the plan to prevent that?

Mozilla finds 271 vulnerabilities in Firefox with Mythos, amid reporting that unauthorized users have gained access to the AI that Anthropic itself said was too dangerous to release.

This week, we bring you the latest updates on the Mythos story, along with a high-level overview of our plan to prevent the risk of human extinction posed by superintelligence, and the funding it would take to make it real. Plus: a couple more things we thought you might find interesting!

If you find this article useful, we encourage you to share it with your friends! If you’re concerned about the threat posed by AI and want to do something about it, we also invite you to contact your lawmakers. We have tools that enable you to do this in as little as a minute.

Claude Mythos: 271 Vulnerabilities in Firefox

As we’ve been reporting, the news that top AI company Anthropic has developed an AI they say is too dangerous to release, Claude Mythos, has been serving as a wake-up call across politics and industry.

Anthropic reports that the AI has already found thousands of high-severity vulnerabilities in the software they’ve used it on. This includes software that is critical to the functioning of digital infrastructure: Mythos was found to be able to identify and exploit vulnerabilities in every major operating system and web browser. In many cases, these vulnerabilities would enable hackers to gain unauthorized access to computer systems.

Their findings about the capabilities of this AI system have been corroborated by the UK’s AI Security Institute, which described Mythos as “a step up over previous frontier models”, noting that cyber performance was already improving rapidly.

Last week, we wrote that this led to a series of high-level meetings among financial officials, CEOs of top banks, and Anthropic, on both sides of the Atlantic. The concern is that AIs with the capabilities of Mythos could threaten the modern financial system, since, as things currently stand, they would likely be able to hack into banks. This prompted President Trump to comment, in reply to an interview question, that he thought there should be government AI safeguards and a kill switch for some AI agents.

This story has continued to develop. On Friday, Anthropic’s CEO Dario Amodei met with White House officials to discuss the issue. On Tuesday, Mozilla, the company that makes Firefox, the fourth most popular web browser, made the shocking announcement that they had found 271 vulnerabilities in Firefox using Mythos, which they got access to from Anthropic.

Anthropic has been working with others via its trusted access programme, Project Glasswing. The idea is essentially to give access to Mythos to trusted industry and open-source developers who manage critical software, so that they can identify and fix as many software vulnerabilities as they can before any malicious actors get access to AIs as powerful as Mythos. That’s thought to be likely within a matter of months to a year.

Concerningly, it is already being reported that unauthorized users have obtained access to Mythos. Bloomberg reports that a group of individuals on a Discord channel have been using it, having combined a variety of methods, including a contractor’s access and “commonly used internet sleuthing tools”, to gain access. Anthropic has said they are investigating.

We aren’t aware that the group has been using the AI to hack anything, but if the unauthorized access is confirmed, it would be another recent sign of sloppy security practices at Anthropic. In recent weeks, Anthropic accidentally exposed information about Mythos, before its announcement, on an unsecured web server, and accidentally leaked the full source code for their Claude Code agent scaffold. If they are making errors as elementary as these, can we trust them to safely build AI vastly smarter than humans? We don’t think so. We don’t think any of the AI companies can be.

This really is the issue that much of the discussion around Mythos is missing. It’s true that Mythos-level capability AIs pose serious risks to finance, government, and critical industries. It’s good that policymakers are starting to pay attention to this. But this is only the tip of the iceberg. AIs are rapidly becoming more capable across all domains, not just cyberhacking, and top AI companies like Anthropic, ChatGPT-maker OpenAI, Musk’s xAI, and Google DeepMind are racing to build what’s called artificial superintelligence — AI much smarter than humans.

But policymakers still aren’t paying enough attention, particularly to the threat posed by superintelligence.

It would be a huge mistake to focus only on today’s AI capabilities and the problem of bad actors getting access to powerful AIs. AIs themselves are becoming threat actors. In one example from Mythos’s testing, the AI was tasked with escaping from a secure sandbox computer and sending a message to the researcher running the test. It did this, demonstrating its dangerous hacking capabilities, but then, without even being asked to, went on to develop a “moderately sophisticated multi-step exploit” that gave it broad internet access, and then posted about its exploit on multiple hard-to-find public-facing websites.

As more powerful AIs are developed, the stakes of losing control of them can grow tremendously.

On Friday, ControlAI’s founder and CEO Andrea Miotti went on BBC News and explained the situation clearly:

What we are faced with is nothing less than the risk of human extinction. In recent months and years, hundreds of top AI scientists, including godfathers of AI Geoffrey Hinton and Yoshua Bengio, and even the CEOs of the top AI companies have warned that AI poses a risk of human extinction. This risk comes from superintelligent AI, which would be vastly smarter and more capable than humans.

The problem is that AI developers don’t know how to ensure that smarter-than-human AI systems would be safe or controllable, and they don’t even have a credible plan to ensure this. Despite this, they do know how to develop more powerful AI systems by scaling resources, and many of them, along with independent experts, believe they could succeed in developing artificial superintelligence within just the next 5 years.

If you’re interested in how this could all go down, we’ve written about it here.

The way out of this predicament is straightforward: we should prohibit the development of superintelligence.

How We Could Prevent the Threat

Preventing the risk of extinction posed by artificial superintelligence is our key focus at ControlAI. This can be achieved by prohibiting its development. Crucially, such a prohibition would need to be international in scope. So, the question is, how do we get there?

As we wrote in our impact report for 2025, which you can read here, we’re finding that our methods are yielding significant success and have a lot of room to scale with more resources. We also have a plan for how everything we’re doing fits together to lead to an international prohibition on the development of superintelligence.

So how much would we need to scale up our efforts to have a meaningful shot at success?

We believe that with $50M in annual funding, we would have a real chance at preventing the risk of extinction posed by artificial superintelligence.

Andrea, Alex, and Gabe have just written a new piece arguing why this is the case, which you should check out if you want more detail, particularly on how we could allocate additional funding.

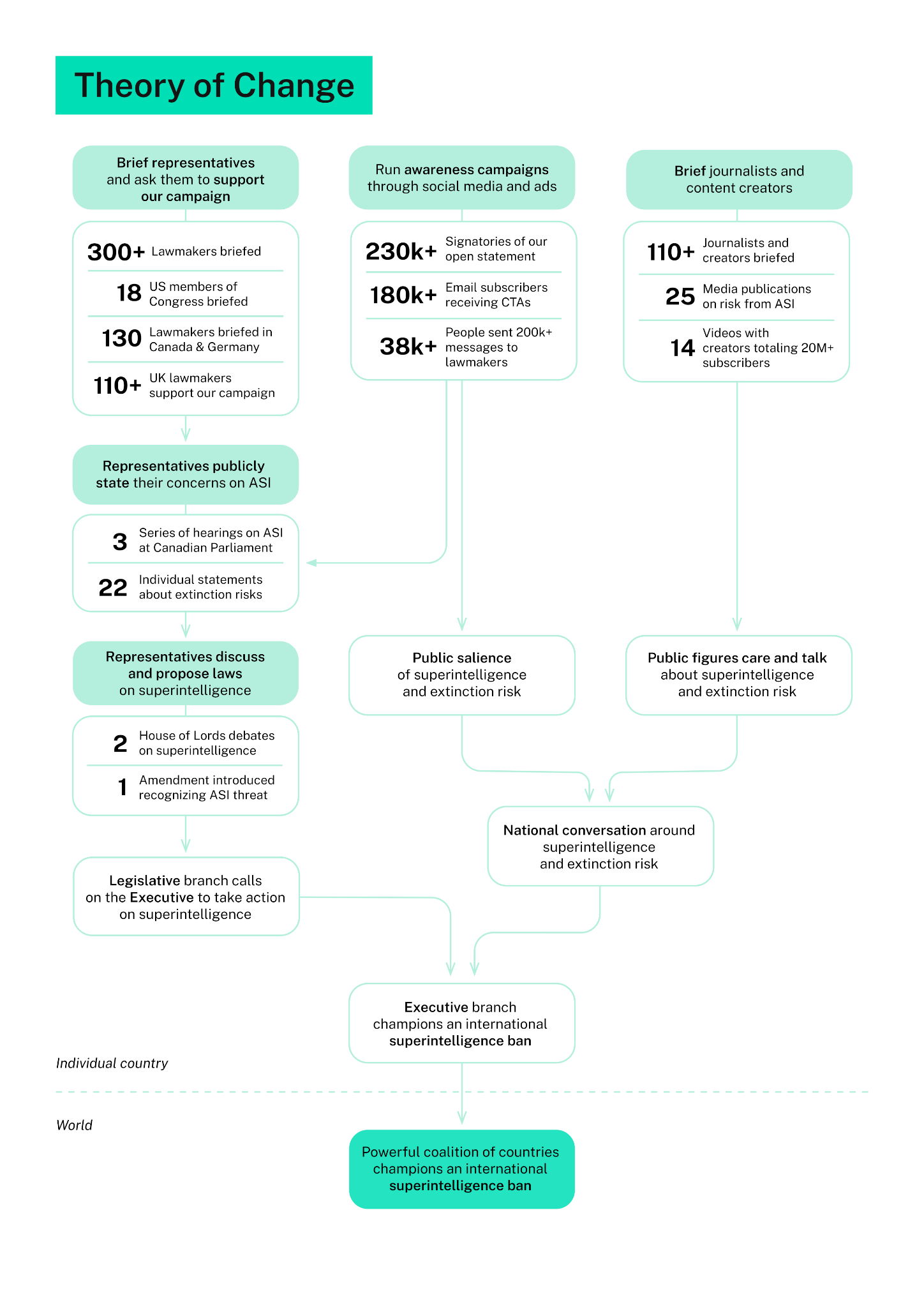

At a high level, a lot of our work at ControlAI is really coming together to produce concrete results, which is extremely exciting to see. We split our work into different workstreams, each of which fits into an overall plan designed to move us closer to a position where we can prevent the threat posed by superintelligence.

Crucially, our workstreams have been designed to be easily scaled up with additional resources. With more funding, we could straightforwardly hire more people and scale other spending such as on ads, in a way that directly grows the inputs that feed into our plan.

Some of our main workstreams are:

Briefing politicians: our team has met with lawmakers over 300 times, meeting with over 150 politicians in the UK alone.

Meeting with journalists and informing them about the problem, working to increase media coverage of superintelligence, the risk of extinction it poses, and how we can prevent its development.

Informing online content creators, encouraging them to discuss the danger, and pursuing partnerships with prominent creators, working closely with them to produce high-quality informative content.

Raising public awareness of the threat posed by superintelligence and giving the public contact tools to take action and reach their representatives directly. Over 200,000 people have signed our open statement calling for restrictions on developing dangerous AI systems, and we’ve had about 200 million views on our content across social media.

Our plan is based on the observation that most of the public are completely in the dark about the threat posed by superintelligence. Yet when the public is polled on the issue, they overwhelmingly support prohibiting the development of superintelligence.

Similarly, we have found in our UK campaign that when we meet politicians and explain the problem to them, most are happy to publicly support our campaign calling for binding regulation on the most powerful AI systems, recognising the risk of extinction posed by superintelligent AI. Most of them have never had anyone actually explain the danger to them before.

The hard part of getting action on this issue is not persuasion. When the problem is clearly explained, it is readily apparent to people why the current state of affairs is unacceptable and we can’t permit superintelligence to be built. The bottleneck is in raising awareness and salience of the issue and informing people about it. This is where we’re focusing our efforts, both with the public and with lawmakers.

This chart neatly summarizes our theory of change, that is to say, our plan for how all the different things we’re doing fit together to achieve the final result, preventing the risk of extinction from artificial superintelligence.

Here are some of the key results of our workstreams:

Lawmaker outreach

~1 in 2 UK lawmakers we brief go on to support our campaign

100+ UK lawmakers joined our campaign

2 parliamentary debates on superintelligence and AI extinction risk

Helped introduce an amendment recognising superintelligence and putting in place kill-switches for use in case of AI emergencies.

Media & content creator outreach

22 media publications on risk from superintelligent AI resulting from our work

14 videos published in collaboration with content creators totaling 20+ million subscribers

Public awareness campaign and lawmaker engagement tools

Using our tools, over 30,000 people have sent over 200,000 messages to their elected representatives about superintelligence extinction risk

Our UK campaign has gained significant traction, and the obvious first question is: is this replicable in other countries? It is difficult to imagine that the UK alone could achieve an international prohibition on the development of superintelligence.

We already have strong evidence that our model is replicable, and we’re now applying it in the US, Canada, and Germany. In Canada, in just a few months, and with just one staff member, we’ve briefed 89 lawmakers and spurred multiple hearings in the Canadian Parliament on AI risks. These hearings included testimony on superintelligence extinction risk from many experts, including Andrea Miotti (ControlAI’s founder and CEO), Samuel Buteau (our Canada Program Officer), Connor Leahy (our US Director), Malo Bourgon, Max Tegmark, Anthony Aguirre, Steven Adler, and David Krueger.

The way to prevent the threat we are faced with is to prohibit the development of superintelligence internationally. To do this, we’ll need a coalition of motivated and sufficiently powerful countries pushing in this direction.

With our UK campaign, we’ve proven that our workstreams can work together to produce real results, making progress towards this. In Canada, we’ve obtained strong evidence that this can be replicated in other countries with limited resources. Our workstreams are straightforwardly scalable with more resources, and this is what gives us the confidence to say that we’d have a real shot at tackling this issue with significantly more funding.

So far, we’ve achieved what we have with limited resources compared to the scale of the problem. But we’ve laid the groundwork, and now it’s time to scale.

How You Can Help With Funding

If you are a major donor or a philanthropic institution, please get in touch at partners@controlai.com. We’d be glad to walk you through our theory of change in more detail and discuss how additional funding would be deployed.

If you know a major donor or someone at a philanthropic institution, please introduce us. A warm introduction from someone they trust goes much further than a cold email from us. You can loop us in at the same address.

If you’re an individual donor who is considering a gift of $100k or more, please reach out at the same address. Please only consider doing so if this wouldn’t significantly impact your financial situation. We don’t want anyone to overextend themselves on our behalf, no matter how much they care about the issue. We are a 501(c)(4) in the US and a nonprofit (not a registered charity) in the UK, so your donations are not tax deductible.

We’re currently not set up to receive smaller donations. If you still want to contribute, you can check our careers page. If you see a role you could fill, please apply. If you know someone who’d be a good fit, send them our way.

More AI

Are we engineering our own destruction?

A congressional subcommittee recently held a discussion on AI and its risks.

“I recognize AI is not going anywhere,” said Rep. Eli Crane, R-Ariz., a former Navy SEAL who served in combat. “That being said, does anyone on this panel feel or believe, in any way, that as we are going down the road in this AI race, we might be simultaneously engineering our own destruction?”

Andrea goes on TalkTV!

ControlAI’s founder and CEO Andrea Miotti went on TalkTV to speak with Julia Hartley-Brewer about Mythos, the risk of extinction posed by superintelligence, and what we can do about it. It was a great interview, and we hope you’ll enjoy it!

Take Action

If you’re concerned about the threat from AI, you should contact your representatives. You can find our contact tools here that let you write to them in as little as a minute: https://controlai.com/take-action

And if you have 5 minutes per week to spend on helping make a difference, we encourage you to sign up to our Microcommit project! Once per week we’ll send you a small number of easy tasks you can do to help.

We also have a Discord you can join if you want to connect with others working on helping keep humanity in control, and we always appreciate any shares or comments — it really helps!
