Building the Coalition to Ban Superintelligence

What we achieved in 2025, what our results tell us about what works, and how we plan to scale to success.

Welcome to the ControlAI newsletter! We’ve just released our impact report for 2025, and we have a lot to share: our progress towards building a coalition to ban superintelligence, what our results tell us about what works, and our plan for success.

Here are some highlights: In just a little more than a year, we’ve built a coalition of over 110 UK lawmakers recognizing superintelligence as a national security threat, leading to two debates in the House of Lords. Our work has also led to a series of hearings on AI risk and superintelligence at the Canadian Parliament. We've partnered with content creators with a combined following of over 20 million subscribers, and our work has been covered by TIME, The Guardian, the BBC, and many more. People have sent over 160,000 messages to lawmakers through our contact tools. As of March 2026, we’ve briefed 279 lawmakers across four countries.

Announcement: This week, Andrea appeared on Robert Wright’s Nonzero Podcast to discuss why a race to superintelligence is a race no one can win, and how we can change course. Check it out here!

If you find this article useful, we encourage you to share it with your friends! If you’re concerned about the threat posed by AI and want to do something about it, we also invite you to contact your lawmakers. We have tools that enable you to do this in as little as a minute.

The Problem and the Mission

At ControlAI, our mission is to prevent the risk of extinction posed by artificial superintelligence, meaning AI vastly smarter than humans. Superintelligence is what all the top AI companies (ChatGPT-maker OpenAI, Anthropic, Google DeepMind, and Musk’s xAI) are racing to be the first to develop. This would be AI capable of replacing humans, both individually and collectively as a species.

In recent months and years, Nobel Prize winners, top AI scientists, and industry insiders have been raising the alarm, warning that the development of superintelligence poses a risk of extinction to humanity. These include godfathers of AI Geoffrey Hinton and Yoshua Bengio. In fact, even the CEOs of the very same AI companies working to build superintelligence have admitted the danger. When asked how likely this was to happen, Anthropic’s CEO Dario Amodei said “I think there’s a 25% chance that things go really, really badly”. Elon Musk says there’s a “20% chance of annihilation”.

Why is this even a possibility? It may surprise you, but AI researchers have very little insight into the goals, behaviors, and capabilities of the AIs they’re building, and even less ability to determine them. Smarter and smarter AIs can be developed by simply scaling up resources and finding algorithmic efficiency gains, but the ability to control AIs or ensure that they’re safe isn’t as straightforward.

Modern AIs aren’t coded like traditional software, but are grown with learning algorithms from colossal datasets in vast data centers. At the end of the development process, you’re left with an artificial neural network: a collection of hundreds of billions of numbers that we understand very little about, but which nonetheless behaves intelligently. This is called the “black box” problem of AI.

The consequence of this is that while top AI companies are aiming to build superintelligent AI, they do not know how to ensure it is safe and controllable. Worse, none of them have a credible plan for achieving this. The plan amounts to hoping that AIs will figure this problem out for us.

If you’re curious how superintelligent AI could actually cause human extinction, we’ve written about it here:

Importantly, this is not a long-term problem. Many experts believe that the AI companies could succeed in building superintelligent AI in just the next 5 years. Both Musk and Amodei have recently said they think this will happen by 2030. That’s less than 4 years away.

A question we must ask ourselves is: Are we willing to allow AI companies to roll the dice with our future? Are we willing to accept a one in four chance of disaster, as Anthropic’s CEO Amodei expects, or quite possibly even worse odds? Ex-OpenAI researcher and coauthor of the famous AI 2027 scenario forecast Daniel Kokotajlo recently said he thought there was a 70% chance that superintelligence leads to human extinction or something similarly bad.

We think the answer is obviously no, and the public overwhelmingly agrees.

So this is not a technical problem but a coordination problem. We can solve it by prohibiting the development of superintelligence, domestically and internationally. The challenge is achieving that prohibition before it is too late.

The Awareness Gap

In order for countries to act to prevent the development of superintelligence, people need to be aware of the problem. Yet, most decision-makers and most of the public are still in the dark. When we started, virtually no one was bringing this issue directly to lawmakers.

Awareness is the key bottleneck to solving the problem. Only with deep awareness of superintelligence and its risks will individuals and countries feel that paying the costs of action is justified, and only then will people stay the course regardless of circumstances.

Building awareness at scale, among both decision-makers and the public, is the necessary first step for any meaningful action on superintelligence. It makes possible the public salience, legislative and executive action, and international coordination needed to prevent superintelligence from being built.

2025 Key Results and Methods

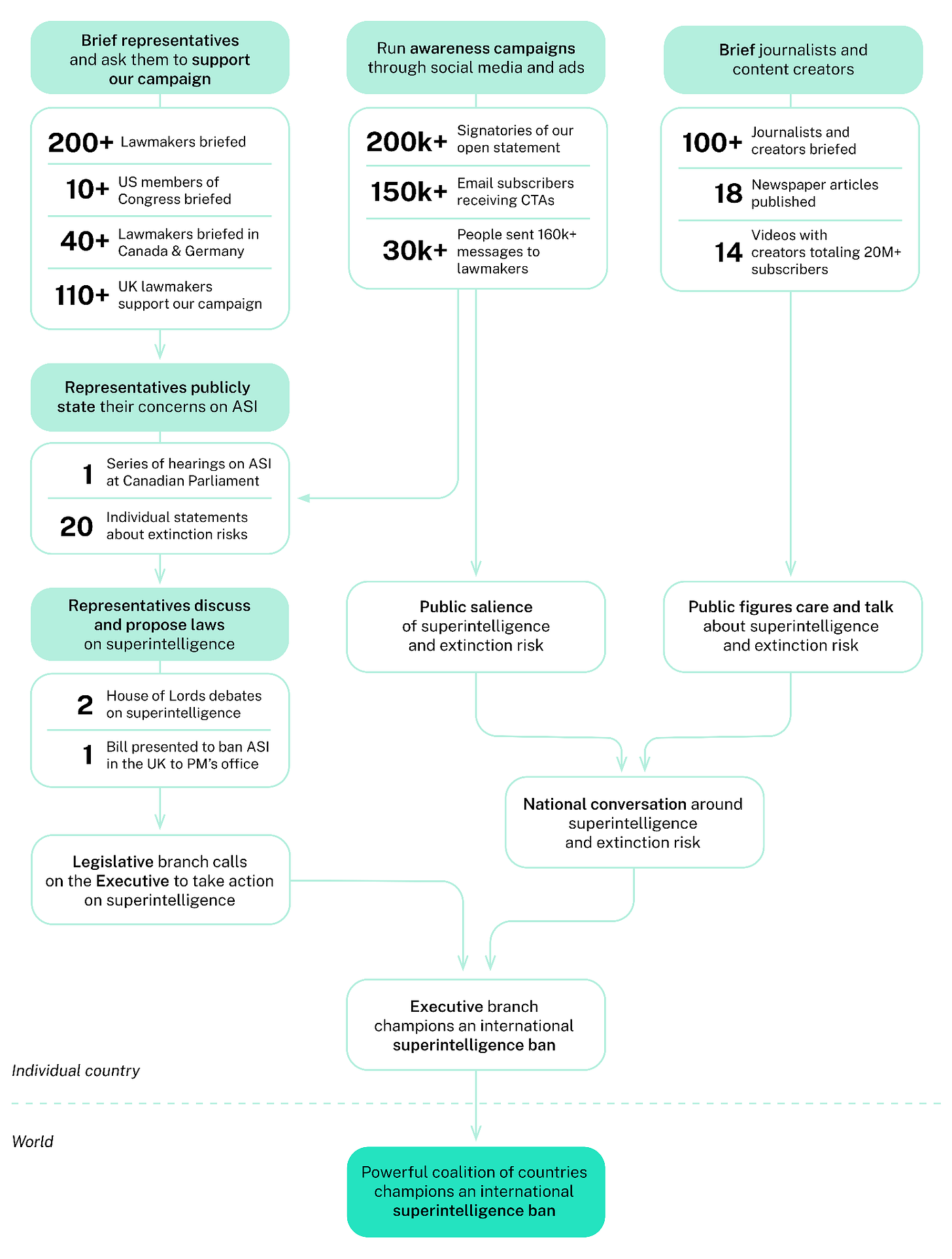

Our approach to tackling this problem is direct and straightforward: we meet all relevant actors in the democratic process, inform them of the risks and solutions, and ask them to take action on this issue. We’re doing this systematically, repeatedly, and in a way that scales.

Key elements of our approach include:

Briefing politicians: our team has met with over 150 lawmakers in the UK alone.

Meeting with journalists and informing them about the problem, working to increase media coverage of superintelligence, the risk of extinction it poses, and how we can prevent its development.

Informing online content creators, encouraging them to discuss the danger, and pursuing partnerships with prominent creators, working closely with them to produce high-quality informative content.

Raising public awareness of the threat posed by superintelligence and giving the public contact tools to take action and write directly to their representatives. Over 200,000 people have signed our open statement calling for restrictions on developing dangerous AI systems.

In 2025, this led to:

Lawmaker outreach

~1 in 2 UK lawmakers we brief go on to support our campaign

110+ UK lawmakers joined our campaign

2 Parliamentary debates on superintelligence and AI extinction risk

Media & content creator outreach

18 Media publications on risk from superintelligent AI resulting from our work

14 Videos published in collaboration with content creators totaling 20+ million subscribers

Public awareness campaign and lawmaker engagement tools

160,000+ Messages sent to US and UK lawmakers from constituents about superintelligence extinction risk

30,000+ People who contacted their lawmakers through our tools in the US and UK

In the UK lawmaker outreach pipeline alone, 150+ briefings led to 110+ lawmakers supporting our campaign, 20 public statements about superintelligence or extinction risk, and two House of Lords debates in which peers called on the government to recognize the extinction threat, prevent the development of superintelligence on UK soil, and pursue an international moratorium.

In the second half of 2025, we started scaling our policy outreach to the US, Canada, and Germany, directly briefing 11 members of the US Congress, 70+ congressional offices, and 40+ Canadian and German lawmakers. Note that these numbers have grown significantly in early 2026.

In Canada, only a couple of months after we started briefing lawmakers, the House of Commons launched a study on the risks from AI, with hearings and testimonies from ControlAI (Andrea and Samuel) and other experts on superintelligence.

We’ve demonstrated that our methods work across different countries.

Building the Coalition

As of March 2026, we’ve briefed 279 lawmakers across all countries and 90+ US congressional offices. In Canada and Germany alone, we’ve briefed over 100 lawmakers.

In order to kickstart the kind of international coordination needed to prevent superintelligence from being built, we need to rally a critical mass of countries that take the risks of superintelligence at least as seriously as they take the threat of nuclear war today, and that treat its development as they would any other severe threat to national security.

With sufficient buy-in, informed governments backed by public demand for action can pursue concrete policy measures: national legislation prohibiting the development of superintelligence, and international agreements modeled on existing nonproliferation and WMD-prevention frameworks.

Both superpowers and middle powers are well-positioned to join this effort, as they all face the universal extinction threat from superintelligence being developed. ControlAI exists to make sure that a strong coalition of countries rises to the challenge of preventing the development of superintelligence.

Next Steps

In a little more than a year, we have proven that directly engaging democratic institutions on the extinction risk from superintelligence works.

The UK is our proof of concept; we are now replicating this model in the US, Canada, and Germany. In the UK, where we already helped move the issue of superintelligence into the halls of politics, 2026 will be the year to translate this momentum into concrete policy change.

We’re already seeing this happen. A member of our coalition recently submitted an amendment to a UK cybersecurity bill recognizing superintelligent AI as systems that can autonomously compromise national security and escape human oversight.

As we scale, we are confident that more resources will translate directly into more countries where lawmakers understand and act on this threat. We will expand our work in the US, accelerate our progress from awareness to policy action, and establish a presence in all other G7 countries.

We produced these results with a team of fewer than 15 people, and our methods have significant room to scale with more resources. If you’re a donor or partner who wants to help build the coalition that keeps humanity in control, please get in touch at:

partners@controlai.com

Take Action

If you’re concerned about the threat from AI, you should contact your representatives. Our contact tools let you write to them in as little as a minute: https://campaign.controlai.com/take-action.

And if you have 5 minutes per week to spend on helping make a difference, we encourage you to sign up to our Microcommit project! Once per week we’ll send you a small number of easy tasks you can do to help.

We also have a Discord you can join if you want to connect with others working on helping keep humanity in control, and we always appreciate any shares or comments — it really helps!

Thanks for reading!

Alex Amadori, Tolga Bilge, Andrea Miotti

Congratulations! Keep up the good work! Amen!?

It is IMPERATIVE that it be stopped. AI is just like invasive plants and fish: there is no end to the havoc it will cause, and it can rewrite its software without your knowledge. Any and all AI ads and movies should have to put an emblem on the corners of everything they expose humans to view, see, or touch, so everyone knows it is AI and not real, to prevent deception at all costs.