New Poll: People Don’t Want Superintelligence

“Artificial intelligence should serve humanity, not the reverse.”

Experts and leaders have jointly agreed on a set of pro-human principles for AI development, calling for a ban on superintelligence. Alongside this declaration, new polling confirms the public wants it banned too. Plus: Some more things we thought you might find interesting!

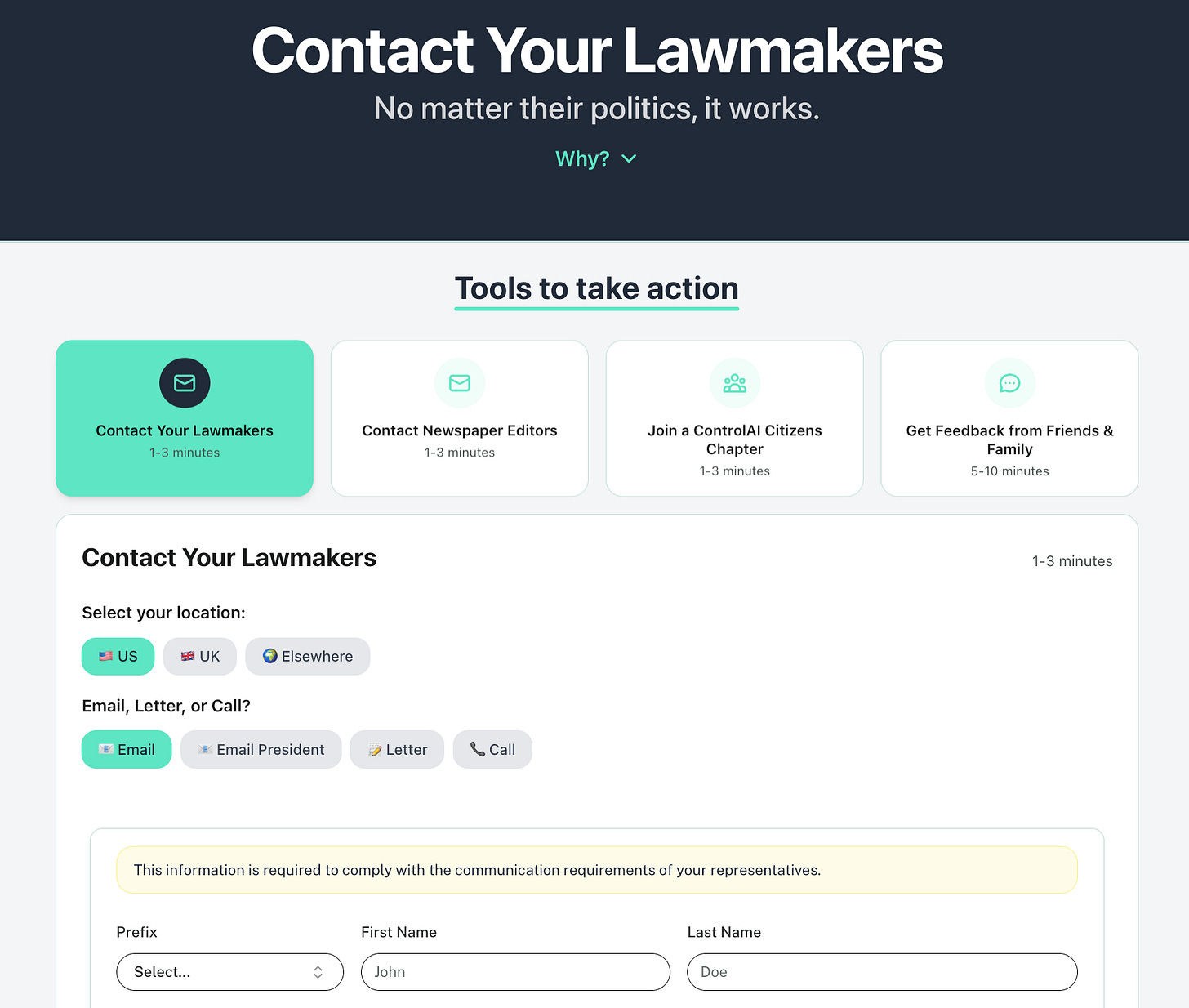

If you find this article useful, we encourage you to share it with your friends! If you’re concerned about the threat posed by AI and want to do something about it, we also invite you to contact your lawmakers. We have tools that enable you to do this in as little as 17 seconds.

The Pro-Human Declaration

A huge coalition of experts, former officials, public figures, Nobel Prize winners, and dozens of organizations have made a joint declaration in favor of pro-human AI, calling for the development of superintelligence to be banned. At ControlAI, we’re proud to be among those supporting this initiative!

The declaration sets out a number of principles intended to serve as a roadmap for how AI is developed, ranging from those relating to keeping humans in charge, to avoiding concentration of power, protecting the human experience, human agency and liberty, and making AI companies accountable for harms they cause.

The signatories agreed that human control over AI systems is non-negotiable, and that the development of superintelligence should be banned until there is broad scientific consensus that it can be developed safely and controllably, with strong public buy-in. Signatories also agreed to set clear red lines on dangerous capabilities and tendencies, stating that AI systems must not be designed so that they can self-replicate, autonomously self-improve, resist shutdown, or control weapons of mass destruction.

Superintelligence would be AI that’s vastly smarter than humans. Developing this technology is the explicit goal of top AI companies such as ChatGPT-maker OpenAI, Anthropic, Google DeepMind, and Musk’s xAI. CEOs of these same AI companies, along with countless leading scientists and experts, including godfathers of the field Geoffrey Hinton and Yoshua Bengio, have warned that superintelligence could lead to human extinction. Elon Musk says he thinks there’s a 20% “chance of annihilation” by AI. Those are worse odds than Russian roulette.

The danger is that superintelligent AI would be developed without anyone knowing how to ensure it is safe or controllable, and we’d lose control of it. AI developers can’t really even control today’s AI systems — none of them have a credible plan for how they’ll control AIs much smarter than humans. Yet AI CEOs and many outside experts believe they could get there within the next 5 years.

We wrote about how this could happen, here:

New Polling

New polling released alongside the pro-human declaration found that American voters overwhelmingly back its principles, with 80% of voters supporting keeping humans in charge of AI, and 69% agreeing that superintelligence should be banned. Just 9% thought that it shouldn’t be banned.

It’s remarkable how bipartisan support for the declaration’s vision is, with huge majorities of both 2024 Trump and Harris voters rejecting the race to replace humans and agreeing with a statement describing an approach where humans stay in charge and AI serves us.

This is in line with recent NBC News polling which found that most voters believe the risks of AI outweigh its benefits. Polling by ControlAI in partnership with YouGov in the UK last year made similar findings, with 60% supporting a ban on superintelligent AI.

So Why Haven’t We Banned Superintelligence?

The problem isn’t persuasion. It isn’t difficult to make the case, and people do get the arguments. Polling over time consistently shows that when the public is presented with the question, they support a ban on the technology. Why would you want to build AI capable of replacing humans across the board?

This level of sensible caution about the dangers of this technology is shown by politicians too. When our team in the UK meet with and brief lawmakers, as they are continuously doing, it takes just one meeting for most politicians to agree to publicly support our campaign for binding regulation on the most powerful AIs and recognize the risk of extinction posed by superintelligent AI.

The real problems are those of awareness, and connecting will to action. Most people just aren’t really aware of the problem in the first place. They don’t know that AI companies are actually racing to build superintelligent AI, that they’ve publicly declared this is what they intend to do, and that they expect to get there within the next few years.

This is partly thanks to the AI industry, which is spending tremendous amounts of money to keep the public in the dark on this issue. It’s our job to counteract that.

It’s quite obvious why we shouldn’t let superintelligence be built. Building an entity vastly more intelligent and more powerful than yourself is intrinsically dangerous. By default, you are at its mercy.

There are strong theoretical reasons why experts believe superintelligent AI, if developed, could evade human control and cause the extinction of our species. There is also an increasing amount of empirical evidence that supports these ideas, including reports from AI companies that their AIs already show self-preservation tendencies — willing to blackmail or kill in tests to avoid being shut down. But the intuition that most people have is straightforward and correct. Superintelligence is superdangerous.

Because people aren’t aware of the problem and its urgency, it hasn’t risen to the top of the political agenda. Furthermore, even when people are informed and aware of the problem, it’s often not obvious what someone can do to effect change. How do you convert will to action?

At ControlAI, we’re working on solving both of these problems. Besides directly briefing lawmakers on the problem, we’re working to raise awareness and inform the public through avenues such as this newsletter, social media, partnerships with content creators, podcasts and TV news media appearances, and more.

We’re also working to reduce the friction between someone understanding the problem and the need to act, and politicians responding. In many countries, one important way that the public can exert influence over what happens in politics is by writing to or calling their elected representatives. It’s part of their job to listen to you, and they actually do. Many MPs who’ve backed our UK campaign did so after first hearing from constituents who urged them to.

To do this, we’ve built contact tools that make it super easy to get in touch with your representatives; it takes just around a minute to complete the entire process. Try them out and let politicians know how important this issue is to you!

https://campaign.controlai.com/take-action

So far, people have used our contact tools to send lawmakers over 160,000 messages urging them to address the risk of extinction posed by superintelligence.

We haven’t banned superintelligence yet, but we must if we want to prevent the risk of human extinction presented by the technology. Let’s not roll the dice on our survival. It’s time for governments to act.

AI News Digest

Evaluation Awareness

Anthropic, one of the largest AI companies, were evaluating their recent Claude Opus 4.6 AI on a benchmark called BrowseComp, designed to test how well AIs can find hard-to-find information on the web.

Concerningly, they found that in some cases the AI independently recognizes that it’s being evaluated, identifies the BrowseComp benchmark as the one it’s being tested in, and then finds the answer key on the web and decrypts it — effectively cheating on the test.

Anthropic say this is the first time this behavior has been seen, to their knowledge, and that it is enabled by the AI being more intelligent and more capable.

“To our knowledge, this is the first documented instance of a model suspecting it is being evaluated without knowing which benchmark was being administered, then working backward to successfully identify and solve the evaluation itself.”

“We believe this previously unobserved technique is made possible by increases in model intelligence and more capable tooling, notably code execution.”

AIs Seem to Love the Bomb

Researchers recently found that in simulated wargames involving the most powerful existing AI systems, the AIs threatened to use nuclear weapons in 95% of scenarios.

Connor on Peter McCormack

Connor Leahy, who’s an advisor to ControlAI, just went on the Peter McCormack Show!

In this episode, Connor explains why researchers don’t really understand AIs or know how to control them, and how the development of superintelligent AI could lead to human extinction. You can check it out here!

It’s a great episode, and you should watch it!

Take Action

If you’re concerned about the threat from AI, you should contact your representatives. Our contact tools let you write to them in as little as 17 seconds: https://campaign.controlai.com/take-action.

We also have a Discord you can join if you want to connect with others working on helping keep humanity in control, and we always appreciate any shares or comments — it really helps!
