AI Beats Mathematicians

AI just solved a math problem that mathematicians couldn’t. This forms part of a broader trend of rapid capability advances, but the ability to control ever more powerful AIs remains elusive.

Welcome to the ControlAI newsletter! This week, it was confirmed that AI completely solved a difficult mathematics problem that multiple mathematicians had been unable to solve, adding to a growing body of evidence that AI capabilities are advancing rapidly in the dangerous race towards superintelligent AI. In this article, we’ll break this down and explain why the trajectory of AI development is keeping AI scientists up at night.

If you find this article useful, we encourage you to share it with your friends! If you’re concerned about the threat posed by AI and want to do something about it, we also invite you to contact your lawmakers. We have tools that enable you to do this in as little as 17 seconds.

FrontierMath

Because of the way that modern AIs are developed — grown, like animals, rather than coded by programmers — there’s no way to really know what an AI is capable of until after it’s been developed. For this reason, researchers develop benchmarks to try to test what AIs can do after they’ve been built.

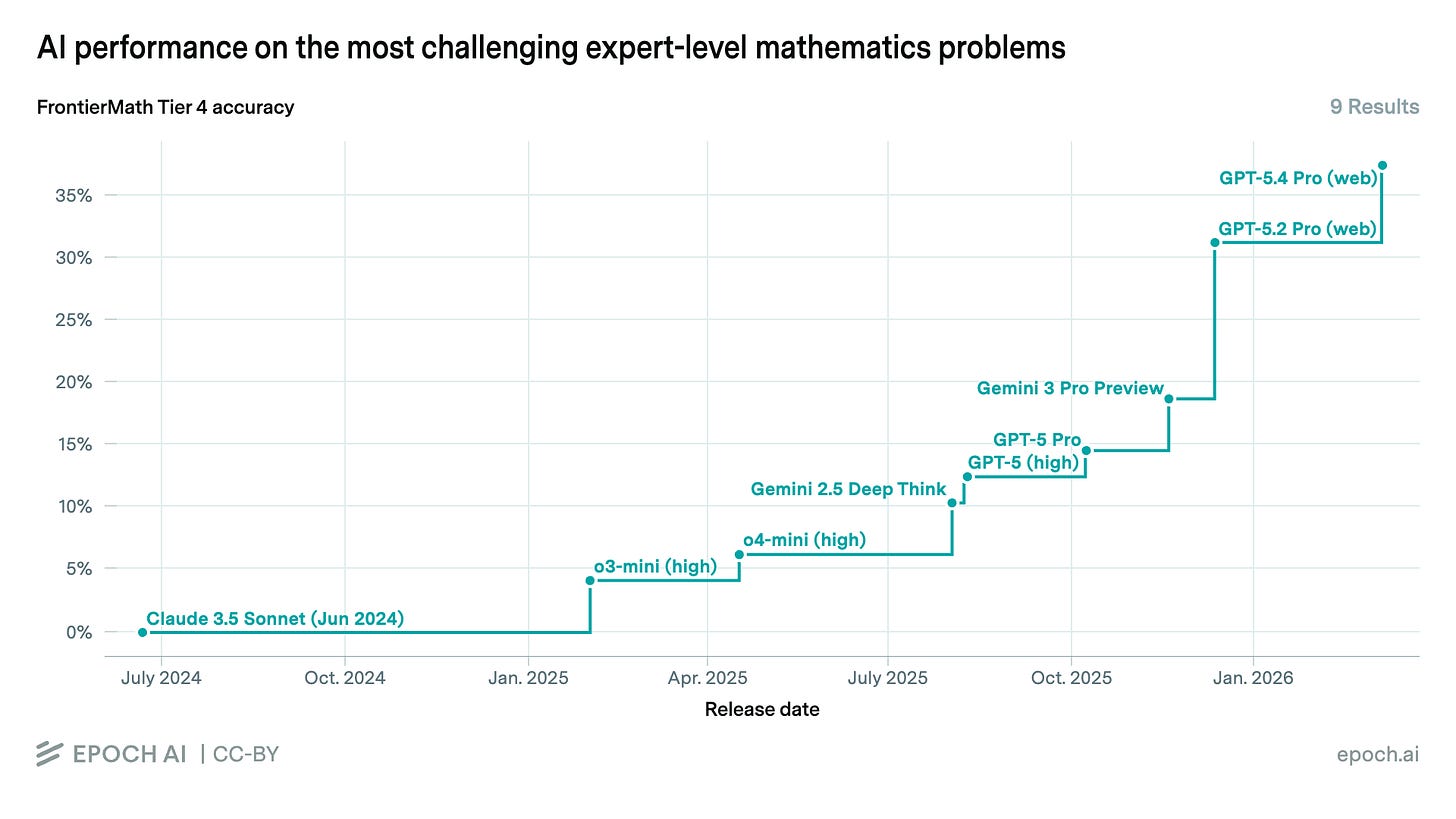

Epoch AI, a nonprofit that studies the trajectory of AI development, maintains the FrontierMath benchmark, which consists of a few hundred unpublished, difficult math problems. They’re organized into tiers, with the highest tier representing research-level problems.

The historical trend is clear. The best AIs that were around in January 2025 could not solve a single one of the hardest problems Epoch had collected. Now, the best AIs solve 38% of them.

Epoch also keeps a collection of open mathematical problems, problems that no mathematician has ever been able to solve. Until recently, AIs hadn’t been able to solve any of these, but that’s just changed.

This week, Epoch confirmed that one of these open problems has been solved by AI too. The problem, called “A Ramsey-style Problem on Hypergraphs”, had been attempted by at least 5 mathematicians and none of them had solved it.

Professor Will Brian, who confirmed the AI’s solution was correct, guessed that it would take an expert 1 to 3 months to actually solve it.

There is a common misconception about AIs that they’re unable to really reason, can’t generate anything new, and essentially just “average” the knowledge of humans. There are other reasons why this is false — we can actually just watch the AIs’ chain of thought as they reason — but this serves as a clear demonstration that AIs can go beyond existing human knowledge. As they get more powerful, we should expect this to happen more often.

Solving this problem wasn’t a huge breakthrough in the field of mathematics, but it seems like only a matter of time before real breakthroughs are made.

This development is yet more evidence of the rapid progress we’re seeing in AI capabilities, with systems becoming ever more powerful by the month.

The Overall Trend

The ability of AIs to solve actual research-level mathematics problems might sound surprising, but it isn’t for those who’ve been paying close attention. In recent years, AI development has been characterized by a whirlwind of rapid advancements in capabilities across the board.

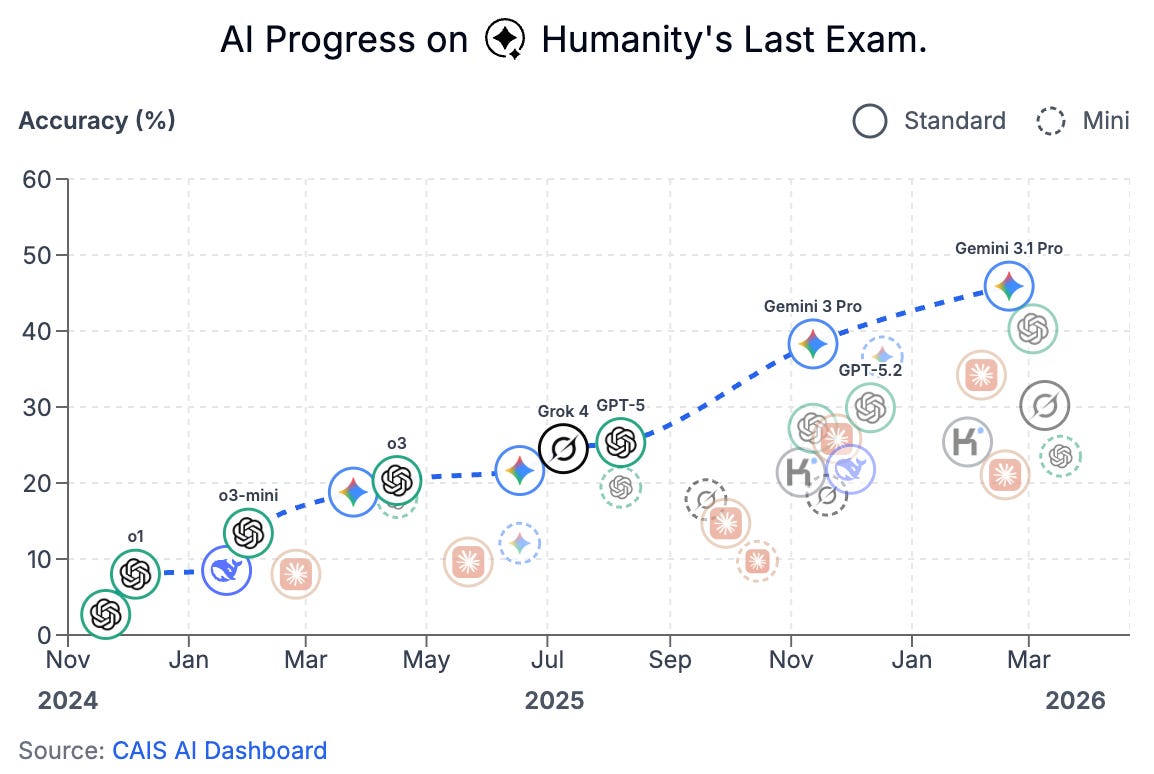

AI benchmarks are being demolished almost as quickly as they can be developed. Google’s Gemini 3.1 Pro AI now scores 45.9% on Humanity’s Last Exam. The best AIs of late 2024 scored only a few percent.

Humanity’s Last Exam is particularly notable because it was designed to be especially difficult, in response to the problem that AIs are zooming through the existing benchmarks. It’s described by its creators as “a multi-modal benchmark at the frontier of human knowledge, designed to be the final closed-ended academic benchmark of its kind with broad subject coverage”.

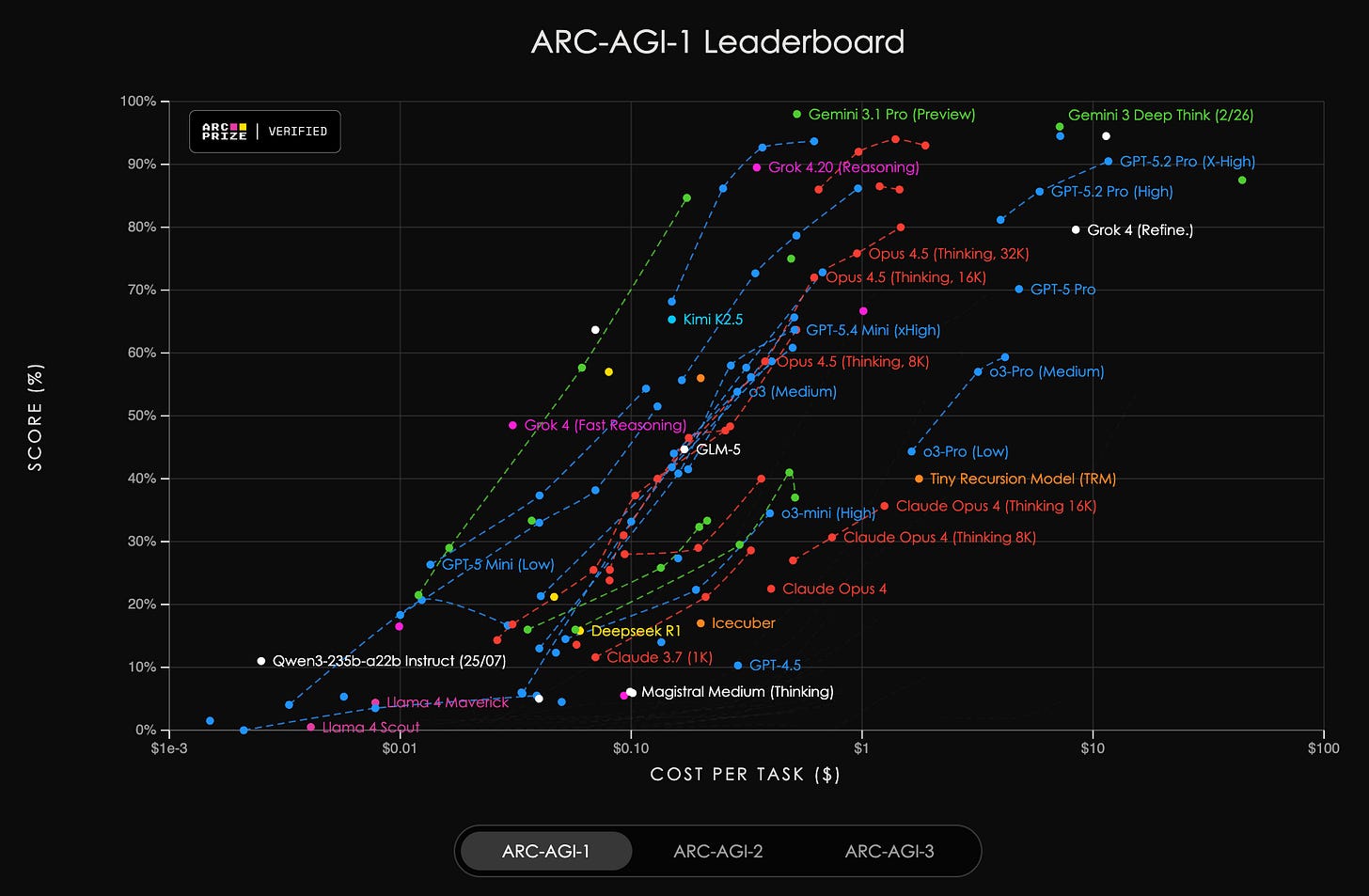

A similar trend is seen on the ARC-AGI-1 and ARC-AGI-2 leaderboards.

These benchmarks measure fluid intelligence, with AIs having to solve questions similar to those a human would be presented with in a non-verbal IQ test (like Raven’s Progressive Matrices). Initially, the best AIs didn’t perform well on these tests, which some took as evidence that AI was nowhere close to human-level intelligence. Now, the ARC Prize Foundation has had to develop a third, even harder, iteration of the benchmark.

But perhaps the most significant metric of AI capabilities is that of coding time horizons, which we wrote about earlier this month. AIs are now able to complete coding tasks that take humans half a day, and the length of tasks they can complete is growing exponentially, doubling every 4 months.
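To get a feel for what a 4-month doubling time implies, here is a minimal sketch of the arithmetic. It assumes that “half a day” means roughly 4 hours of human work and that the doubling time stays constant; both are illustrative assumptions, not measurements, and the function name is made up for this example.

```python
def projected_horizon_hours(start_hours: float,
                            months_elapsed: float,
                            doubling_months: float = 4.0) -> float:
    """Task length (in human hours) after months_elapsed,
    assuming the horizon doubles every doubling_months."""
    return start_hours * 2 ** (months_elapsed / doubling_months)


# Assumption: "half a day" is taken to be about 4 hours of human work.
START_HOURS = 4.0

for months in (0, 4, 8, 12, 24):
    hours = projected_horizon_hours(START_HOURS, months)
    print(f"{months:>2} months: about {hours:.0f} hours")
```

Under these assumptions, the horizon grows eightfold in a year (three doublings) and 64-fold in two years. Whether the trend actually continues at that pace is an open question.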

Domains like mathematics, and particularly coding, are especially relevant when it comes to the trajectory of AI development, since top AI companies are currently aiming to be able to replace their own researchers and engineers with AIs, which could massively accelerate the rate of AI progress, leading to a dangerous intelligence explosion.

What This All Means

Top AI companies like ChatGPT-maker OpenAI, Anthropic, Musk’s xAI, and Google DeepMind are racing at breakneck speed towards developing a form of AI called artificial superintelligence. This would be AI vastly smarter than humans; it would have the ability to replace humans individually, and across the board as a species. Anything a human can do, superintelligent AI could do more competently, faster, and cheaper.

Despite the clear and rapid progress towards that goal, as we’ve covered in this article, none of these companies have a credible plan to ensure that AIs so powerful would be safe or controllable. In fact, as we wrote about last week, they can’t really even ensure that today’s AIs are controllable: a series of incidents in which AI agents slipped out of control has recently been reported.

The ability to ensure control remains one of the most pressing unsolved problems of the field, but the AI companies’ plan is that, essentially, they’ll get AIs to figure this out and hope and pray it works. This isn’t very encouraging. The UK AI Security Institute’s Chief Scientist, Geoffrey Irving, who used to lead safety teams at OpenAI and Google DeepMind, recently said in an interview that this plan is flawed and we can’t have much confidence in it working.

And what happens if they build superintelligent AI without being able to control it? Everyone could die.

If superintelligence is built without the ability to control it, we would be faced with an entity much more intelligent than ourselves that we don’t control. This is why hundreds of top AI scientists, including godfathers of the field Bengio and Hinton, have been warning that the development of this technology could lead to human extinction. Even the CEOs of these same companies working to develop it have publicly warned of this risk.

And if superintelligent AI has goals that differ from ours – and currently we know of no way to verify, let alone set, the goals of modern AIs – and doesn’t care about avoiding our destruction, it could view us as a potential obstacle to eliminate, or might just transform the world around us in pursuit of its goals such that it is no longer habitable for humans. It is far from certain, for example, that the optimal conditions for computer systems would be the same as those for humans.

The problem is clear: on the current trajectory we are on track to develop smarter-than-human AI that could evade our control, risking human extinction. The solution is similarly clear. When AI companies’ CEOs tell us the technology they’re building risks our own survival as a species, we can take them at their word and ban the development of this technology.

There are many benefits that can be attained from the use of narrow, specialized AIs, but superintelligence is simply too dangerous. At ControlAI we’re working to inform lawmakers and the public about the problem and get superintelligence banned. We hope you’ll join us in our efforts to advocate for this!

Take Action

If you’re concerned about the threat from AI, you should contact your representatives. You can find our contact tools here that let you write to them in as little as 17 seconds: https://campaign.controlai.com/take-action.

We also have a Discord you can join if you want to connect with others working on helping keep humanity in control, and we always appreciate any shares or comments — it really helps!
