The Exponential

AIs are rapidly improving in their ability to code. The consequences of this could be huge.

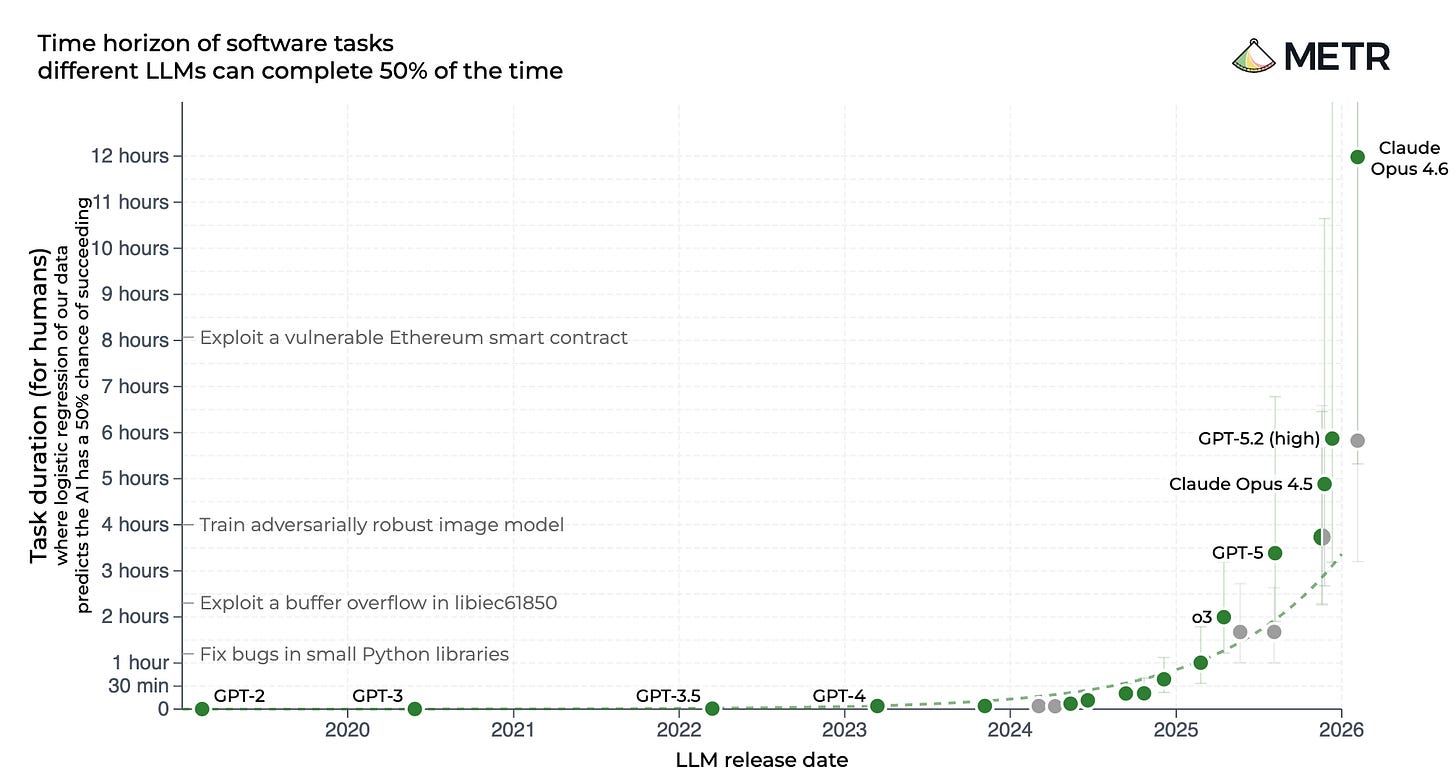

New tests confirm that the difficulty of coding tasks AIs can reliably complete is still doubling every 4 months, with AIs now able to complete tasks that take humans half a day. We’ll break down what this trend means, and why its potential consequences are so concerning. Plus: a brief digest of the week’s AI news!

If you find this article useful, we encourage you to share it with your friends! If you’re concerned about the threat posed by AI and want to do something about it, we also invite you to contact your lawmakers. We have tools that enable you to do this in as little as 17 seconds.

Time Horizons

The difficulty of the tasks that AI systems can reliably complete keeps growing as new AIs are developed; AIs are becoming more intelligent and more capable.

A year ago, researchers at METR, an organization that studies AI capabilities, found a way to measure this with the concept of an AI “time horizon”. The idea is to use the length of time it takes expert humans to complete a task as a measure of how difficult that task is.

Take the example of someone solving a difficult math problem. If it takes 5 hours to solve it, we’d say that task has a task length of 5 hours.

In publishing their research, METR showed that the “time horizons” of AIs on coding tasks have been growing exponentially, doubling every 7 months for the previous 6 years.

They did this by giving both skilled professionals and AI systems a suite of coding tasks. They measured how long each task took humans to complete, and then checked how often an AI could complete that task. As you’d expect, AIs tend to have an easier time on tasks that humans complete quickly, and have lower success rates on tasks that take humans longer.

METR was then able to use this relationship to find the point where an AI succeeds 50% of the time; that’s its “time horizon”. Then, by measuring the time horizon for many AIs released over the previous 6 years, they were able to see how the time horizon for the best AI at any time grew, producing what some have called “the graph”.
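To make the idea concrete, here is a minimal sketch of how a 50% time horizon could be estimated. It assumes a simple logistic curve fitted to success against the logarithm of human task length; the data, the function names, and the fitting routine are all illustrative assumptions, not METR’s actual code or results.

```python
import math

def fit_logistic(xs, ys, lr=0.1, steps=5000):
    """Fit P(success) = sigmoid(a + b * x) by gradient ascent on the log-likelihood."""
    a, b = 0.0, 0.0
    n = len(xs)
    for _ in range(steps):
        grad_a = grad_b = 0.0
        for x, y in zip(xs, ys):
            p = 1.0 / (1.0 + math.exp(-(a + b * x)))
            grad_a += y - p
            grad_b += (y - p) * x
        a += lr * grad_a / n
        b += lr * grad_b / n
    return a, b

def time_horizon_minutes(human_minutes, ai_succeeded):
    """Task length (in human minutes) at which the fitted success rate is 50%."""
    log_lengths = [math.log2(m) for m in human_minutes]
    center = sum(log_lengths) / len(log_lengths)
    # Centering the inputs keeps this simple gradient ascent well behaved.
    xs = [x - center for x in log_lengths]
    a, b = fit_logistic(xs, ai_succeeded)
    # sigmoid(a + b * x) equals 0.5 exactly where a + b * x = 0.
    return 2 ** (center - a / b)

# Hypothetical data: ten tasks, from 1 minute to about 8.5 hours of human time,
# and whether a made-up AI completed each one (1 = success, 0 = failure).
human_minutes = [1, 2, 4, 8, 16, 32, 64, 128, 256, 512]
ai_succeeded = [1, 1, 1, 0, 1, 0, 1, 0, 0, 0]

horizon = time_horizon_minutes(human_minutes, ai_succeeded)
print(f"Estimated 50% time horizon: {horizon:.1f} human-minutes")
```

With this made-up data, the AI mostly succeeds on short tasks and mostly fails on long ones, so the estimated horizon lands between the 16-minute and 32-minute tasks. Repeating the same fit for each AI would give one point per AI on a graph of time horizon against release date.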

It’s important to underscore that the time horizon measures the difficulty of the tasks an AI can complete, expressed as how long those tasks take humans. It does not measure how long the AI itself takes; AIs can do these tasks much faster.

We last wrote about time horizons when OpenAI released ChatGPT-5, which had a time horizon of 2 hours and 15 minutes. Now METR has tested Anthropic’s Claude Opus 4.6, and its time horizon is 12 hours. This is a drastic increase, but it’s not unexpected. With the release of reasoning models in late 2024, a new trend emerged: AIs’ time horizons are no longer doubling every 7 months; they’re now doubling every 4 months. Claude’s result is in line with this trend.
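As a rough illustration of what these doubling times mean, the sketch below counts how many doublings separate the two time horizons mentioned above, and how long that growth would take at each doubling rate. It assumes smooth exponential growth, and the function names are our own; it is arithmetic on the figures in this article, not a statement about when either AI was released.

```python
import math

def doublings_between(start_hours, end_hours):
    """Number of doublings needed to grow from start_hours to end_hours."""
    return math.log2(end_hours / start_hours)

def months_to_grow(start_hours, end_hours, doubling_months):
    """Months needed for that growth at a fixed doubling time (an assumption)."""
    return doublings_between(start_hours, end_hours) * doubling_months

earlier_horizon = 2.25  # 2 hours and 15 minutes, in hours
later_horizon = 12.0    # 12 hours

n = doublings_between(earlier_horizon, later_horizon)
print(f"{n:.2f} doublings from {earlier_horizon} to {later_horizon} hours")
for doubling_months in (4, 7):
    months = months_to_grow(earlier_horizon, later_horizon, doubling_months)
    print(f"At one doubling every {doubling_months} months: {months:.1f} months")
```

The jump from 2 hours and 15 minutes to 12 hours is a little under two and a half doublings. On the faster 4-month trend that is under a year of progress, while on the older 7-month trend it would take well over a year.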

Why Coding Automation Matters

AI development is driven by two key inputs:

Physical resources, like the amount of compute that an AI is trained with, which depends on the amount and power of the chips companies can get access to. In recent years, AI companies have been scaling up the amount of compute that they train their AIs with by about 5X per year.

Algorithmic progress, which is the process of finding ways to develop AIs such that they are more compute efficient, allowing companies to develop even more capable AIs with the same amount of physical resources. This relies on AI companies’ ability to do research and to implement experiments in code. In recent years, AI companies have been able to use algorithmic improvements to improve their compute efficiency by around 3X per year.

Scaling both of these inputs reliably leads to more capable AIs.
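To get a feel for how the two inputs combine, here is a small sketch. It assumes the two factors simply multiply into a single “effective compute” figure, which is a common simplification rather than something the numbers above guarantee; the variable names are ours.

```python
import math

compute_growth_per_year = 5.0    # physical compute, about 5X per year
algorithmic_gain_per_year = 3.0  # compute efficiency, about 3X per year

# Assumption: the two gains multiply into one "effective compute" growth rate.
effective_growth_per_year = compute_growth_per_year * algorithmic_gain_per_year

# Doubling time in months implied by that yearly growth factor.
effective_doubling_months = 12 / math.log2(effective_growth_per_year)

print(f"Effective compute growth: {effective_growth_per_year:.0f}X per year")
print(f"Implied doubling time: {effective_doubling_months:.1f} months")
```

Under that assumption, the combined effect is about 15X per year, or a doubling roughly every 3 months.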

This trend of AIs rapidly getting better at coding is a huge deal because coding is a big part of AI R&D. The ability to fully automate coding could significantly speed up the AI R&D loop, and AIs are also getting better at doing research, which would only compound this.

Top AI companies like Anthropic and OpenAI aren’t just trying to make their lives easier by automating their coders, or just trying to replace people in the job market. They’re explicitly aiming to develop artificial superintelligence: AI vastly smarter than humans, which would replace humans across the board. Countless top AI scientists, including godfathers of AI Yoshua Bengio and Geoffrey Hinton, and even the CEOs of the very companies building it, have warned that superintelligence could lead to human extinction.

The path they’ve outlined to do this is clear. They plan to automate the development of ever more powerful AIs, and they believe they might be able to achieve this very soon. In October, OpenAI’s CEO Sam Altman stated this explicitly:

We have set internal goals of having an automated AI research intern by September of 2026 running on hundreds of thousands of GPUs, and a true automated AI researcher by March of 2028.

The plan is to get AIs to improve AIs, or recursively self-improve, initiating an intelligence explosion.

Building an entity more intelligent and more powerful than yourself is intrinsically dangerous, and AI companies don’t really even know how to control the AIs they’re developing today. Nobody knows how to ensure that superintelligent AI would be safe or controllable.

On top of this, the path that AI companies have chosen to get there, automating AI R&D, is particularly dangerous. Anthropic cofounder and chief scientist Jared Kaplan has said that recursive self-improvement and an intelligence explosion would be the “ultimate risk”. An intelligence explosion would likely lead rapidly to superintelligent AI, which we have no way to control, and it could all happen so fast that meaningful human oversight becomes impossible.

The speed at which it could happen is part of why it’s so dangerous, but this is exactly why AI companies are pursuing it. AI companies like OpenAI, Anthropic, xAI, and Google DeepMind are locked in a race to be the first to develop superintelligence. They are all aware of the risks, and each of their CEOs has warned that superintelligence could cause human extinction, yet they’ve shown that they’re unwilling or unable to stop.

Importantly, this isn’t a long-term risk that we can put to the back of our minds. Many experts believe superintelligence could arrive within just the next 5 years. In a recent interview, Anthropic’s CEO Dario Amodei said that we are near the end of the exponential:

What has been the most surprising thing is the lack of public recognition of how close we are to the end of the exponential. To me, it is absolutely wild that you have people — within the bubble and outside the bubble — talking about the same tired, old hot-button political issues, when we are near the end of the exponential.

Ajeya Cotra, who works on risk assessment at METR and came third out of over 400 participants in a forecasting competition on AI capabilities last year, commented on the new results. She now thinks AI time horizons will exceed 100 hours by the end of the year, and says she doesn’t “see solid evidence against AI R&D automation *this year*”, though she still thinks that it’s unlikely to happen this year.

Given the danger posed by superintelligence and the potential for this all to spin out of control very rapidly, we think it’s clear what must be done. Governments should ban the development of superintelligence, both within countries and internationally. This way we can avoid the threat of extinction that experts warn of.

That’s what we’re campaigning on, and we hope you’ll join us.

If you do want to help out, we have contact tools that enable you to contact your representatives in mere seconds. So far, people have sent over 160,000 messages with these tools, urging lawmakers to address the risk of extinction posed by superintelligence.

So far, 114 UK lawmakers have joined our call for binding regulation on the most powerful AI systems, recognizing the risk of extinction posed by superintelligent AI. Many of the MPs who support us joined our campaign after hearing from constituents.

AI News Digest

Anthropic and the DoW

The Pentagon has designated Anthropic a supply chain risk, after the two failed to come to a deal over contractual language about the potential use of Anthropic’s AIs in fully autonomous weapons and domestic surveillance.

ChatGPT-5.4

OpenAI has released ChatGPT-5.4, their most capable AI to date.

Sanders

Senator Bernie Sanders (I-VT) spoke with some of the leading experts on AI about the risk that superintelligence could cause human extinction.

School shooting

OpenAI has adjusted some of their safety protocols, after a school shooting in Canada where the company reportedly didn’t alert authorities that the perpetrator had described violent intent to ChatGPT.

Take Action

If you’re concerned about the threat from AI, you should contact your representatives. You can find our contact tools here that let you write to them in as little as 17 seconds: https://campaign.controlai.com/take-action.

We also have a Discord you can join if you want to connect with others working on helping keep humanity in control, and we always appreciate any shares or comments — it really helps!
