How Would AI Take Control?

It could happen surprisingly soon, according to a recent scenario forecast.

Those of us working on AI safety are often asked: how exactly could artificial superintelligence take over?

A research team led by former OpenAI researcher and whistleblower Daniel Kokotajlo has sought to answer this question, writing a compelling and detailed scenario forecast backed by deep research to explain exactly how this could happen.

You can read their scenario here: https://ai-2027.com

Their team has a strong forecasting track record, with Kokotajlo having predicted the rise of AI chain-of-thought reasoning, inference compute scaling, AI chip export controls, and AI training runs that would cost more than $100 million, more than a year before the launch of ChatGPT. Another member of their team, Eli Lifland, won the 2022 RAND Forecasting Initiative (formerly INFER) forecasting competition.

What are the key takeaways from the scenario?

Artificial superintelligence could arrive soon

The authors predict that 2027 is the most likely year that superintelligence would be developed, with development accelerated by the automation of AI R&D.

The trajectory that AI 2027 outlines hinges on a critical moment in AI development: the point at which AI systems are able to significantly speed up AI R&D. This begins with a “superhuman coder”, a system developed by the leading AI company that can do any coding task its best engineer can, while being much faster and cheaper.

At ControlAI, we’ve previously written about what could happen when AIs are improving AIs, in “From Intelligence Explosion to Extinction”.

The AI 2027 research supplements provide detailed forecasting and modeling on when this critical moment might occur.

In the scenario, once superhuman coders have been developed, the leading AI company, dubbed “OpenBrain”, uses them to accelerate AI R&D towards developing superhuman AI researchers. These differ from superhuman coders in that they are not only better than the best humans at coding, but also better than the best AI researchers at all AI research tasks.

The authors predict that superhuman coders will have an overall effect on AI R&D equivalent to a 5x progress multiplier, with superhuman AI researchers being developed just a few months later.

Superhuman AI researchers further accelerate AI R&D. The authors estimate that this corresponds to a 25x progress speedup, which in expectation results in the rapid development of artificial superintelligence less than a year later.
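To get a feel for what these multipliers mean, here is a minimal sketch of the arithmetic. It assumes a progress multiplier of N means that N months' worth of unassisted AI R&D progress is made in one calendar month. The function name and the 12-month baseline are our own illustration, not part of the AI 2027 models, which are considerably more detailed.

```python
def calendar_months_needed(baseline_months: float, multiplier: float) -> float:
    """Calendar months needed to make `baseline_months` of unassisted progress.

    Assumption (ours, for illustration): the multiplier is a constant speedup
    applied to the whole period.
    """
    if multiplier <= 0:
        raise ValueError("multiplier must be positive")
    return baseline_months / multiplier


if __name__ == "__main__":
    # Multipliers quoted in the article: 5x (superhuman coder),
    # 25x (superhuman AI researcher).
    for label, multiplier in [("superhuman coder", 5),
                              ("superhuman AI researcher", 25)]:
        months = calendar_months_needed(12, multiplier)
        print(f"{label}: a year of progress in about {months:.2f} months")
```

Under these assumptions, a year of unassisted progress would take about 2.4 months at 5x, and about half a month at 25x.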

What happens if artificial superintelligence is developed?

AI 2027 argues that superintelligence will dictate our future, not us. Millions of superintelligent agents would rapidly execute tasks beyond human comprehension. They’ll be so useful that the authors expect they’ll be widely deployed, with superhuman strategy, hacking, and weapons development.

We’re not ready

Artificial superintelligence could develop adversarial goals, leading to human disempowerment by AI. In the AI goals forecasting supplement, Daniel Kokotajlo discusses how superintelligent AIs might develop goals that are incompatible with human flourishing. In the scenario forecast, humans voluntarily give power to superintelligent AIs that they wrongly believe are under their control. Everything seems to be going fine, until it’s too late.

The scenario also serves as a warning on two critical issues:

Artificial superintelligence could lead to a seizure of total power over the future of humanity by an individual or small group.

A US-China race towards superintelligence could lead to cutting corners on safety, increasing the likelihood of a disastrous outcome.

What do we do about it?

The authors of AI 2027 say they’ll publish policy recommendations in the future.

At ControlAI, we’re concerned about the threat from superintelligence. AI could result in human extinction, something that has been warned of by Nobel Prize winners, hundreds of leading AI scientists, and even the CEOs of the top AI companies themselves.

We’ve developed concrete policy proposals in A Narrow Path, our plan for humanity to survive AI and flourish.

These include a defense-in-depth approach, combining compute-based thresholds with novel measures that directly tackle dangerous AI capabilities, and a comprehensive international framework for AI governance designed to prevent a competitive arms race and ensure cooperation. We’ve developed measures that should be implemented both domestically and internationally.

Getting the policies needed to address the threat from superintelligence enacted is a separate problem. To help solve it, last week we published the Direct Institutional Plan.

The Direct Institutional Plan (DIP) is the most direct way to achieve this, going through the institutions that power our societies. It engages not only lawmakers, but also governments, media, and civil society.

Crucially, it’s a collaborative effort that any citizen or organization can implement independently. We’ve provided detailed policy briefs and tools to make getting in touch with your representatives quick and easy.

Other news we’ve been following

AB 501

A bill has been introduced in the California State Legislature, aiming to prevent OpenAI from converting from a non-profit to a for-profit company. OpenAI’s mission is officially to develop AGI for the benefit of humanity. Its recent attempts to change its status have been the subject of significant criticism, and even legal action by Elon Musk, one of its co-founders.

The bill, AB 501, has received significant support, with AI experts Yann LeCun, Stuart Russell, and Gary Marcus, as well as legal scholar Lawrence Lessig, signing an open letter backing it.

Gary Marcus wrote in a statement: “OpenAI has made a mockery of nonprofit status for years. They should not be allowed to do so, and should not set a precedent for others to do the same.”

OpenAI fundraising

OpenAI has closed a $40 billion funding round, the largest amount ever raised by a private tech company. The deal values OpenAI at $300 billion, with SoftBank leading the round with $30 billion.

Last October, SoftBank’s CEO Masayoshi Son said that artificial superintelligence will exist by 2035.

Notably, the round comes with a condition: SoftBank has said that they might cut their investment down to $20 billion if OpenAI doesn’t convert into a for-profit company by the end of the year.

Dan Hendrycks warns against an AI Manhattan Project

In an article in The Economist, Dan Hendrycks makes a strong case that an AI Manhattan Project would be impractical, destabilizing, and self-defeating.

Hendrycks makes three core points:

It would require unattainable levels of secrecy.

It could break nuclear deterrence, since artificial superintelligence could be used to build superweapons that would “blast a hole in existing deterrence strategies”.

We could lose control of AI. Hendrycks observes that the most direct route to superintelligence would be through what he terms “intelligence recursion”, which is when AI systems automate their own development. He notes that such a route is “unlikely to be reliably controllable”.

Hendrycks adds that regardless of whether the US loses control of AI, China would view an AI Manhattan Project as a threat to its own survival, and take countermeasures, “stepping up espionage, cyber-sabotage, and other pre-emptive attacks on AI infrastructure”.

We’ve previously written about the folly of pursuing an international AI race in “A Race We All Lose”.

Update from ControlAI

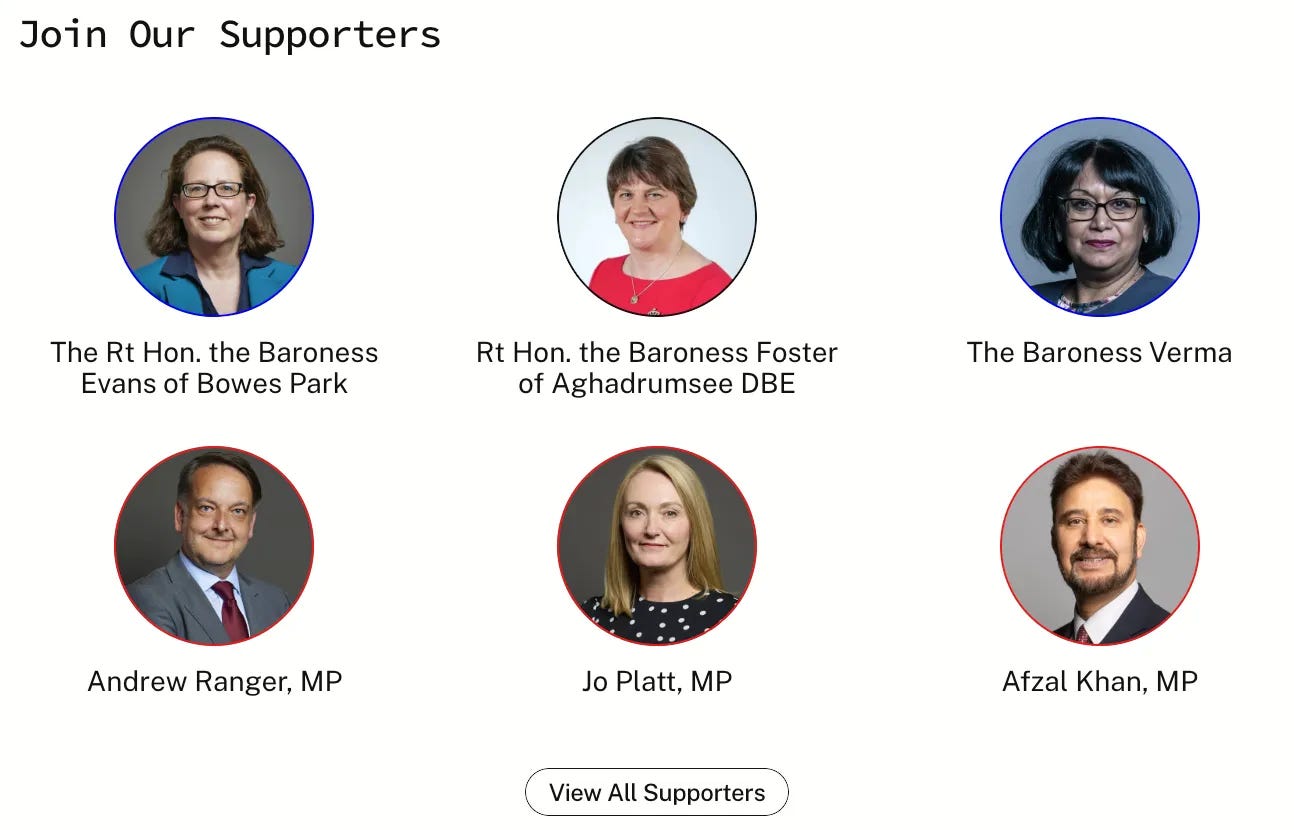

Our team has been continuing to meet with UK politicians as part of our ongoing campaign, and we’ve been maintaining a steady stream of new supporters signing up. We’re happy to announce that we’ve now reached 30 UK politicians backing our campaign for binding regulations on powerful AI, acknowledging the extinction threat it poses.

See you next week!

People must be allowed to earn a living, AI with Robotics can do ANY job a human can do and will unless we limit AI by law to a small percentage of the workforce!

Once you’ve let the cat out of the bag, there is no return! AI is extremely dangerous, and will know that humans are not the best thing for our planet! AI must never become smarter than humans! They will see us as dangerous to the planet and get rid of us! 😡😱😰