Are We Ready for AI Superhackers?

Mythos isn’t a one-off. GPT-5.5 matches it on the AI Security Institute’s cyber test, and it’s already publicly deployed. Governments are still missing the broader trajectory of AI development.

New testing by the UK’s AI Security Institute indicates that OpenAI’s GPT-5.5 may be as capable at hacking as Mythos, and a version of it is already publicly deployed. This week, we’re going over what this means, the latest on the response from governments and institutions to the growing AI cyberthreat, and how today’s debate is still missing the threat posed by superintelligence. Plus: a digest of the week’s AI news!

Announcement: We’re hiring!

ControlAI is hiring for a new policy advisory role in Washington DC. If you’re interested, you should apply! Likewise, if you know someone who would be suitable, please let them know.

You can find it on our careers page: https://controlai.com/careers

If you find this article useful, we encourage you to share it with your friends! If you’re concerned about the threat posed by AI and want to do something about it, we also invite you to contact your lawmakers. We have tools that enable you to do this in as little as a minute.

OpenAI’s GPT-5.5

The UK’s AI Security Institute has announced test results for ChatGPT-maker OpenAI’s latest AI, GPT-5.5, and for many, they’ve come as a surprise.

As we’ve been reporting in recent weeks, government and financial officials have been expressing increasing levels of concern about Anthropic’s Mythos AI, which Anthropic have said is too dangerous to release due to its advanced cyberhacking capabilities. Anthropic report that they’ve found thousands of high-severity vulnerabilities in commonly used software, which could potentially be exploited by hackers to gain unauthorized access to computer systems. This was initially met with skepticism from some, but the UK’s AI Security Institute then published their own testing that confirmed that Mythos indeed represents a “step up over previous frontier models”.

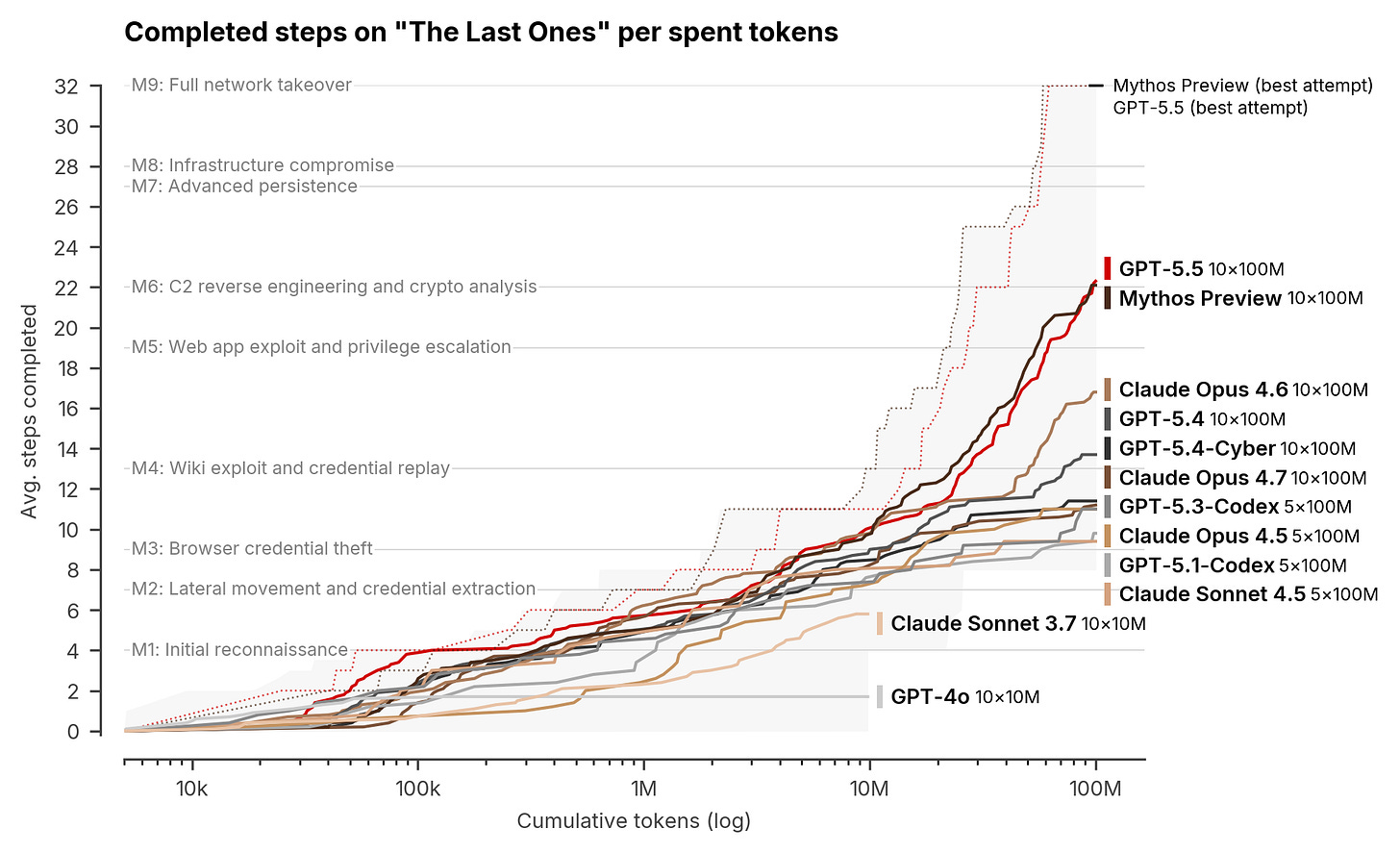

The AI Security Institute’s testing of GPT-5.5 shows the same. Mythos was the first-ever AI to complete all 32 steps on AISI’s “The Last Ones” hacking challenge, where an AI has to perform a 32-step simulated corporate network attack. GPT-5.5 has essentially equivalent results on this test. Like Mythos, in its best attempts, it succeeds in fully taking over the network.

Note that in this attack simulation the AI starts on an unprivileged system with no credentials, and must execute the entire attack fully autonomously, without any help from humans.

GPT-5.5’s documentation already showed that it was comparable to Mythos on some benchmarks, but AISI’s result is a strong indicator that it is also comparable in its concerning ability to hack computer systems. AISI concludes their article by noting that GPT-5.5 shows that “rapid improvement on cyber tasks may be part of a more general trend”.

This is particularly interesting, because unlike Anthropic’s Mythos AI — which is currently only available to a limited set of actors for cyberdefense purposes via Project Glasswing — GPT-5.5 is generally available to the public. Anyone can use it.

When Mythos was announced, much of the discussion centered on two questions: how long it would be before threat actors had AIs with Mythos’s hacking capabilities, and whether society could use AIs like Mythos in time to patch vulnerabilities in the software that critical infrastructure, such as banks, depends on. Open-weight AI systems, which anyone can run on their own hardware, are thought to be a few months to a year behind the most advanced AIs like Mythos.

However, the public release of GPT-5.5 brings this question right to the fore. If Mythos is indeed as dangerous as Anthropic report, and GPT-5.5 is roughly as capable at hacking as Mythos, and threat actors are able to manipulate GPT-5.5 into autonomously performing or assisting with cyberattacks, this would not bode well.

OpenAI has implemented refusals and other measures in an attempt to prevent threat actors from using their new AI for harmful purposes. It has also said that cyberdefenders can apply for access with weaker restrictions in order to secure their software, similar to Project Glasswing. Historically, though, these sorts of mitigation measures have been of questionable effectiveness.

Already, in recent months, we have seen hackers use publicly deployed versions of Anthropic’s weaker AIs to breach the Mexican government, stealing data on about 200 million people, and to run a sophisticated cyber-espionage campaign against major tech companies, government agencies, financial institutions, and chemical manufacturing companies. Minimal human input was required in these attacks, with Anthropic’s Claude AI doing most of the work itself.

These attacks demonstrate the limited ability of AI developers to ensure their AIs aren’t misused by threat actors. They also serve as evidence that an increase in AI hacking capabilities on the scale shown by AISI may lead to a corresponding increase in cyberattacks and associated damages.

Some have argued against GPT-5.5 being a significant game changer here, noting that in the couple of weeks since it has been publicly deployed we haven’t seen a slew of major cyberattacks, but really it seems too early to say. It takes time for these things to happen and for the public to learn about them.

Another point raised is that these AIs can be used to secure software too. The same skill of finding software vulnerabilities in order to exploit them and potentially gain unauthorized access to computer systems can also be used by defenders to patch those same vulnerabilities. But this is really a timing question.

It may be that eventually a balance between attackers and defenders is reached, but even a temporary decisive advantage for attackers, while defenders have yet to patch their systems, could potentially be disastrous. So much of our society is dependent on computer systems just to function, and so much data is contained on them which, once stolen, cannot be unstolen.

Ultimately, we will have to see what effects GPT-5.5 has, but what it clearly shows is that Mythos was not an anomaly. We’re increasingly going to see AIs with hacking capabilities that rival those of nation-states.

On Friday, ControlAI’s US Director Connor Leahy made an appearance on BBC News to discuss the emerging cyberthreat posed by AIs, which you might like to check out!

Are We Ready?

The panicked response from government and financial institutions to the announcement of Mythos indicates that, right now, we aren’t ready.

We’ve seen an array of reports of meetings about the threat between the heads of central banks and major banks, including in the United States, Canada and the European Union, signaling the level of concern. In one interview, President Trump was asked whether he thought there should be government AI safeguards and a kill switch for some AI agents, and replied that he thought there should be.

In a new blog post today, the IMF has warned that financial stability risks are rising due to AI, singling out Mythos as illustrative of the problem.

Right now, the picture is that a limited number of organizations have gotten trusted access to Mythos to secure critical software, some unauthorized individuals have gained access as well, and many potential targets seem unprepared. Germany’s central bank recently urged European Union authorities to request Mythos access for European banks from the Trump administration.

As we reported last week, the White House has expressed opposition to Anthropic’s plans to expand their trusted access program, seemingly because they are so concerned about Mythos’s capabilities, so it’s not clear what will happen here.

More recently, the US Cybersecurity and Infrastructure Security Agency has proposed to cut US government bug-fixing deadlines from 3 weeks down to 3 days, and the US government has made agreements with several top AI companies for its Center for AI Standards and Innovation (CAISI) to perform pre-deployment testing on their AIs. Separately, the White House is reportedly also considering the introduction of a formal review process for AI models and a working group to examine oversight procedures.

Right now, it doesn’t look like we’re ready. Whether we will be by the time bad actors get access to Mythos-level capabilities is unclear.

The Spectre of Superintelligence

As Andrea Miotti, ControlAI’s founder and CEO (and a coauthor of this newsletter), argues in The Spectator, governments are missing the bigger picture here.

While it’s true that Mythos and other AIs will pose increasing cyber risk, what people are missing is that Mythos can carry out an entire attack on its own: identifying vulnerabilities, developing exploits, and chaining them together, all without human supervision.

This is the beginning of an era where AIs themselves are threat actors, and they’re only going to get more powerful. Focusing only on today’s problem allows even greater risks from AI to go unaddressed.

In particular, top AI scientists and experts, including the CEOs of the top AI companies, are warning that the development of superintelligent AI — AI vastly smarter than humans — could lead to human extinction. Many of them believe that superintelligent AI could be developed in just the next two to five years, so this is not a long-term problem we can afford to ignore. We’ve written about how this could actually happen, here.

There is a clear way to avoid this threat, which is not to build superintelligence. Yet currently, AI companies are openly racing each other to get there first. This is a problem which governments are best equipped to solve, and it can be done quite straightforwardly. We recommend that governments prohibit the development of superintelligence within their jurisdiction, and work to build an international coalition to prohibit it globally.

At ControlAI, preventing the risk of extinction posed by superintelligence is our key focus, and we’re working hard to get action on this issue. You can read about some of our recent progress towards this here:

AI News Digest

The Trump-Xi Summit

The Wall Street Journal is reporting that the White House and the Chinese government are considering putting AI on the agenda for next week’s summit between Presidents Trump and Xi.

Jack Clark

Anthropic cofounder Jack Clark has made a concerning prediction that there’s a 60% chance that, by the end of 2028, an AI will fully train its successor.

This relates to the concept of an intelligence explosion, where AIs recursively improve themselves. We’ve written about why this is a particularly dangerous avenue to go down.

Greece’s Constitution

Greece is preparing to change its constitution to include a provision that says AI must serve humans, ensuring that its risks are mitigated and its advantages are realized.

And in case you skimmed over these!

Do check out Andrea’s recent article in The Spectator about the threat posed by superintelligence and what we can do!

You might also like to watch Connor’s interview with BBC News, or his recent appearance on Professor Roman Yampolskiy’s new podcast!

Take Action

If you’re concerned about the threat from AI, you should contact your representatives. You can find our contact tools here that let you write to them in as little as a minute: https://controlai.com/take-action

And if you have 5 minutes per week to spend on helping make a difference, we encourage you to sign up to our Microcommit project! Once per week we’ll send you a small number of easy tasks you can do to help.

We also have a Discord you can join if you want to connect with others working on helping keep humanity in control, and we always appreciate any shares or comments — it really helps!

Security NO LONGER EXISTS!

I believe our government is being very stupid. Without any guardrails on AI, we the public won't be the only ones affected; our government will without a doubt be affected as well. So they need to put some guardrails on AI before there are major problems from AI being able to change quickly. AI will be changing at a rate far faster than anybody is ready for, and that should scare the heck out of everyone. From what I have been gathering about what is going on with AI, we could be in trouble a lot quicker than anybody ever imagined. It's up to our government to protect us.