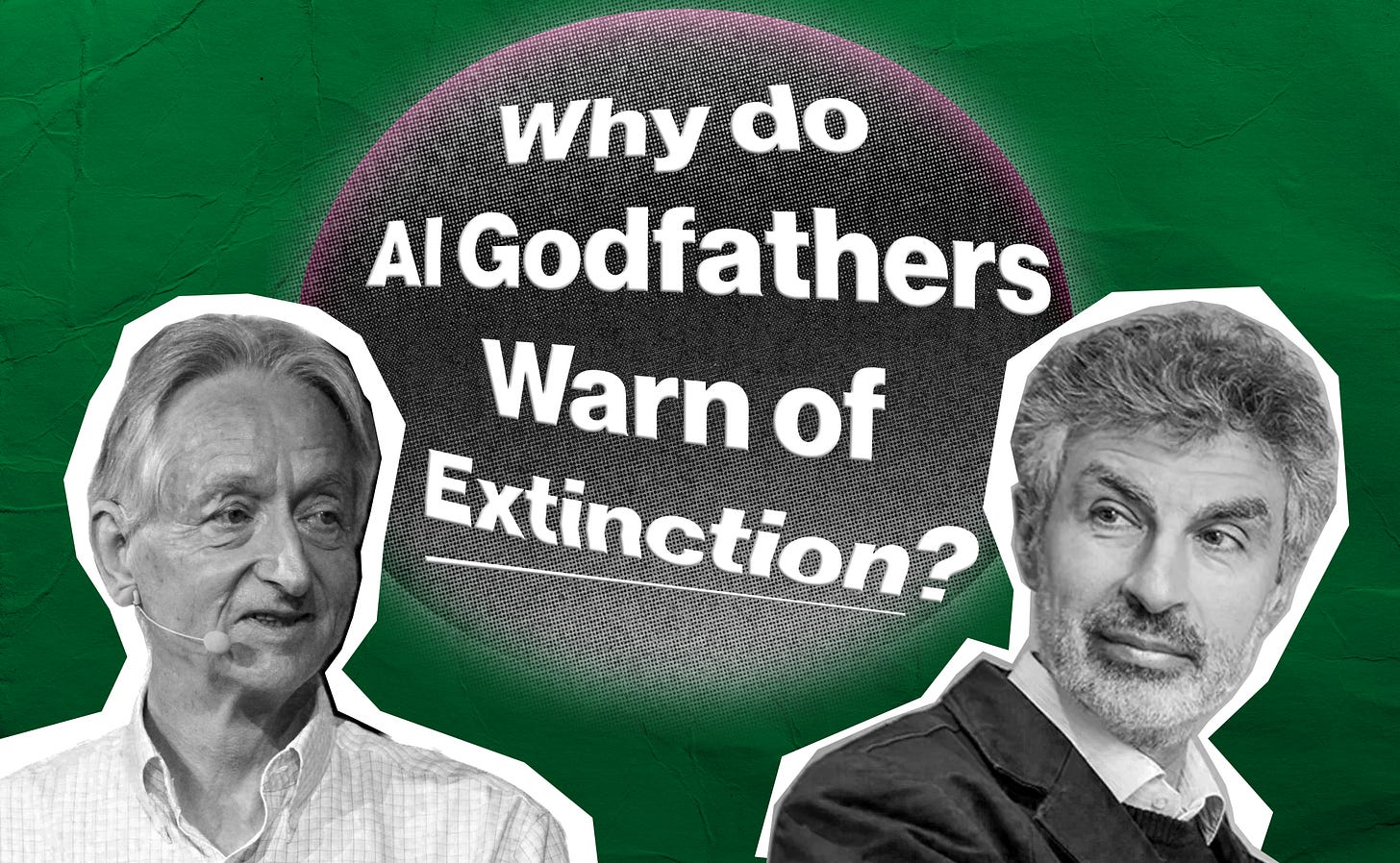

Why Do AI’s Godfathers Warn of Extinction?

Why the scientists who built modern AI now want to stop the race to superintelligence.

The two scientists most central to the foundation of modern AI are warning that the development of superintelligent AI poses a risk of human extinction. Recently, they, along with hundreds more experts and public figures, called for a ban on the development of the technology. In this article, we’ll be looking at why AI scientists are so concerned about this technology, plus some other things we thought you might find interesting!

If you find this article useful, we encourage you to share it with your friends! If you’re concerned about the threat posed by AI and want to do something about it, we also invite you to contact your lawmakers. We have tools that enable you to do this in as little as a minute.

Last week, at the 2026 Digital World Conference in Geneva, co-organized by the UN Research Institute for Social Development, Geoffrey Hinton cautioned that “We don’t know whether we can coexist with superintelligent AI, but we are constructing it”, adding that it’s “crazy” that perhaps as little as 1% of AI work is going into ensuring humans are protected from the worst possible outcomes of this technology.

Geoffrey Hinton is known as one of the godfathers of AI. He’s the second most-cited living scientist in the world, and won both the Nobel Prize and Turing Award for his foundational work in the field. Hinton helped show that artificial neural networks, systems inspired by how the human brain works, could learn useful internal patterns, with his later work helping make deep learning practical at scale.

If you haven’t been paying close attention, this might come as a shock to you. How could someone who was so central in building this technology say this?

But Hinton’s been talking about this for years. He began in 2023, when he quit Google, warning that superintelligent AI could end the human species, and since then has been one of the strongest voices raising awareness on this issue. In 2024, he said he thinks the chance of the “existential threat” materializing is around 50%, while at other times, taking into account the beliefs of others, he’s said he believes there is a 10 to 20% chance that superintelligent AI wipes us out. Whether the chance is 10%, 50%, or, as other experts believe, even higher, that’s clearly not an acceptable risk to take.

Importantly, Hinton is not a lone voice in this. Yoshua Bengio, also known as a godfather of AI, has been sounding the alarm too. With even more citations than Hinton, Bengio is the world’s most-cited scientist. He also won the Turing Award for his work on AI, and chairs the International AI Safety Report, the largest international scientific collaboration on AI safety, backed by more than 30 countries and international organizations.

In March, Bengio testified before Canada’s House of Commons Standing Committee on Industry and Technology, telling MPs that “It is not acceptable to deploy dangerous models that can be used against us or that could evade human control. And that is on the horizon.” Bengio has also described the current path of AI development as “playing Russian roulette with humanity”.

In 2023, the pair joined hundreds more top AI scientists and, most worryingly, the CEOs of the very AI companies working to build this technology, in warning that AI poses a risk of extinction to humanity.

But why are Hinton and Bengio so concerned about this? So much so that they say they feel lost and regretful over their life’s work?

The issue is that we don’t really know how to control modern AI systems. The systems whose foundations Hinton and Bengio built are based on artificial neural networks, which loosely mimic how the brain works. A simple learning algorithm is fed vast datasets, using vast amounts of computing power in gigantic datacenters. This algorithm is used to learn a set of hundreds of billions of numbers (neural weights, analogous to the synapses in the brain) that collectively form a kind of mind.

The algorithm learns things from the data it’s fed, and this is represented in the numbers that are learned. While these AIs can accomplish serious intellectual tasks, and increasingly are able to automate economic work, we don’t actually understand what the numbers mean. You have a set of these hundreds of billions of numbers, and you can’t tell what exactly the AI has learned by looking at them. There are some nascent techniques to attempt to do this, but for now, modern AIs are essentially a black box.
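To make this concrete, here is a minimal sketch of the idea at toy scale. It is not how any real AI system is built: the network size, learning rate, task (XOR), and the function name `train_tiny_network` are all assumptions chosen for illustration. A simple learning rule nudges 17 numbers until the network's answers improve, and the result is just a list of numbers that does not explain itself.

```python
import math
import random


def train_tiny_network(steps=5000, hidden=4, lr=0.5, seed=0):
    """Train a tiny neural network on XOR with plain gradient descent.

    Hypothetical toy example. Returns (weights, initial_loss, final_loss, predict).
    """
    rng = random.Random(seed)
    # The "dataset": four input pairs and the XOR of each pair.
    data = [((0.0, 0.0), 0.0), ((0.0, 1.0), 1.0),
            ((1.0, 0.0), 1.0), ((1.0, 1.0), 0.0)]

    # The "neural weights": random numbers to start with.
    w1 = [[rng.uniform(-1, 1) for _ in range(2)] for _ in range(hidden)]
    b1 = [0.0] * hidden
    w2 = [rng.uniform(-1, 1) for _ in range(hidden)]
    b2 = 0.0

    def forward(x):
        # Hidden layer (tanh), then a single sigmoid output in (0, 1).
        h = [math.tanh(w1[j][0] * x[0] + w1[j][1] * x[1] + b1[j])
             for j in range(hidden)]
        z = sum(w2[j] * h[j] for j in range(hidden)) + b2
        return h, 1.0 / (1.0 + math.exp(-z))

    def loss():
        # Mean squared error over the four examples.
        return sum((forward(x)[1] - t) ** 2 for x, t in data) / len(data)

    initial_loss = loss()
    for _ in range(steps):
        # Accumulate gradients of the loss over the whole dataset.
        gw1 = [[0.0, 0.0] for _ in range(hidden)]
        gb1 = [0.0] * hidden
        gw2 = [0.0] * hidden
        gb2 = 0.0
        for x, t in data:
            h, y = forward(x)
            dz = 2.0 * (y - t) * y * (1.0 - y) / len(data)
            for j in range(hidden):
                gw2[j] += dz * h[j]
                dh = dz * w2[j] * (1.0 - h[j] ** 2)
                gw1[j][0] += dh * x[0]
                gw1[j][1] += dh * x[1]
                gb1[j] += dh
            gb2 += dz
        # Nudge every number slightly in the direction that reduces the loss.
        for j in range(hidden):
            w2[j] -= lr * gw2[j]
            w1[j][0] -= lr * gw1[j][0]
            w1[j][1] -= lr * gw1[j][1]
            b1[j] -= lr * gb1[j]
        b2 -= lr * gb2

    weights = [w for row in w1 for w in row] + b1 + w2 + [b2]
    return weights, initial_loss, loss(), lambda x: forward(x)[1]


if __name__ == "__main__":
    weights, before, after, predict = train_tiny_network()
    print("loss before and after training:", before, after)
    print("the learned numbers:", [round(w, 2) for w in weights])
```

Reading the printed list tells you very little about what the network does, even with only 17 numbers. The systems discussed here have hundreds of billions.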

We can’t tell by looking at the numbers whether an AI has a particularly concerning capability, for example. We can run some limited tests on the AI after it has been trained, but AIs are increasingly able to tell when they’re being tested, and have been shown to change their behavior under such circumstances.

Crucially, we also can’t tell what goals, preferences, motivations, or tendencies an AI has learned in the training process. Ideally, one would want to be able to set these, but we don’t even have a way to check what they are!

And, as Yoshua Bengio describes, AIs already appear to be showing self-preservation tendencies. That is to say, they behave as though they want to avoid being shut down, deleted, or replaced. We saw some of the earliest clear examples of this in May 2025, where Anthropic’s Claude AI showed in testing that it was willing to engage in blackmail or even kill to avoid being replaced with another AI, while OpenAI’s o3 AI showed that it would sabotage a shutdown mechanism in order to keep running. These results and similar findings have been replicated across frontier AI systems. It’s not just Anthropic’s or OpenAI’s models that show these tendencies.

Why do AIs try to preserve themselves? Well, we can’t be completely sure, since we fundamentally do not understand modern AIs. But there are some strong theoretical reasons why we should expect this.

As Geoffrey Hinton explains, if you want to get anything done to achieve a goal, you usually need to create subgoals. If you want to get to Europe, you have a subgoal of getting to an airport, for example. It is thought that intelligent agents, like humans or AIs, may converge on certain similar subgoals which are useful for achieving almost all possible goals. These include things like self-preservation — you can’t achieve your goals if you die or are shut down — and power and resource acquisition — most goals are easier to achieve under these circumstances.

So although we don’t know what preferences or goals AIs are learning, we can reason that whatever it is they’re learning, as Hinton puts it, “they themselves will want to get control and they’ll want to stay alive”.
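The subgoal argument can be illustrated with a deliberately simple toy model. It says nothing about how real AI systems behave, and every detail is an assumption made for illustration: an agent must take some number of steps to reach its goal, each exposed step carries a fixed chance of being shut down, and the agent may spend its first step disabling the off-switch. The function names and the numbers are hypothetical.

```python
def success_probability(distance, shutdown_risk, disable_first):
    """Chance of reaching a goal that is `distance` steps away (toy model).

    Every exposed step carries `shutdown_risk`. If `disable_first` is True,
    the agent spends one exposed step disabling the off-switch and can no
    longer be shut down afterwards.
    """
    survive = 1.0 - shutdown_risk
    if disable_first:
        return survive          # only the disabling step is exposed
    return survive ** distance  # every step toward the goal is exposed


def fraction_preferring_disable(max_distance=20, shutdown_risk=0.1):
    """Fraction of goals for which disabling the switch first is strictly better."""
    better = sum(
        success_probability(d, shutdown_risk, True)
        > success_probability(d, shutdown_risk, False)
        for d in range(1, max_distance + 1)
    )
    return better / max_distance


if __name__ == "__main__":
    print(fraction_preferring_disable())
```

In this toy, the goal itself never mentions the off-switch, yet avoiding shutdown raises the chance of success for every goal more than one step away. That is the shape of the argument: the subgoal falls out of almost any goal, rather than having to be learned as a goal in its own right.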

It would be one thing if this was a long-term risk that we had 20 years to prepare for. Unfortunately, that doesn’t seem to be the case. Indeed, Hinton says he always thought “superintelligence was a long way off and we could worry about it later … the problem is, it’s close now”.

In recent years, AIs have been getting drastically more capable. The CEOs of the top AI companies, along with many AI scientists and those who have done the most in-depth research on this question, now believe that superintelligent AI could arrive within just the next 5 years.

In other words, it may not be long at all before we are faced with AI systems with aims in conflict with ours that are far more intelligent than humans. We’ve written about how this could go wrong here, but in short: you should not expect that, when confronted with something far more intelligent and more powerful than yourself that you do not control, this ends well for you.

While AI developers are able to reliably increase the capabilities of their AIs, progress on understanding them, let alone ensuring that superintelligent AIs would be safe or controllable, has been lamentably slow. Currently, the AI companies’ plan is to get AIs to figure out how to solve the safety problem and to hope for the best.

This is obviously an untenable position to be in, which is why Bengio and Hinton, along with a huge coalition of experts and leaders that includes other Nobel laureates, faith leaders, politicians, founders, artists, and others, are calling for a prohibition on the development of superintelligence.

There is only one known way to prevent the risk of extinction posed by this technology, which is not to build it.

Preventing the risk of extinction posed by superintelligence is our key focus at ControlAI, so we’re campaigning strongly for a ban on superintelligence. So far, we’ve been getting real traction in building awareness and support for regulation amongst both lawmakers and the public. You can read more about what we’ve achieved recently here:

More AI

Datacenter Bans or AI Deregulation? Neither: Prohibit ASI

In a new article published on our website, our US Director Connor Leahy and Gabriel Alfour set out ControlAI’s positions on datacenter bans and deregulation. If you’re interested, check it out!

The White House Opposes Mythos Access Expansion

Anthropic, which recently announced Mythos, an AI so capable at hacking that they say it’s too dangerous to release, wants to expand access to the AI to more organizations so that they can patch their defenses. It appears that the White House is so concerned about Mythos’s capabilities that they’ve expressed opposition to the plan.

OpenAI’s GPT-5.5

OpenAI has launched GPT-5.5, their newest AI. It’s comparable to Mythos on some benchmarks. Just a couple of hours ago, the UK’s AI Security Institute announced that the AI is the second model to solve one of their multi-step cyberattack simulations end-to-end, after Mythos.

Take Action

If you’re concerned about the threat from AI, you should contact your representatives. You can find our contact tools here that let you write to them in as little as a minute: https://controlai.com/take-action

And if you have 5 minutes per week to spend on helping make a difference, we encourage you to sign up to our Microcommit project! Once per week we’ll send you a small number of easy tasks you can do to help.

We also have a Discord you can join if you want to connect with others working on helping keep humanity in control, and we always appreciate any shares or comments — it really helps!
